Loading collection...

Model Optimization Vocabulary

Techniques for making models faster and more efficient

All 7 Words

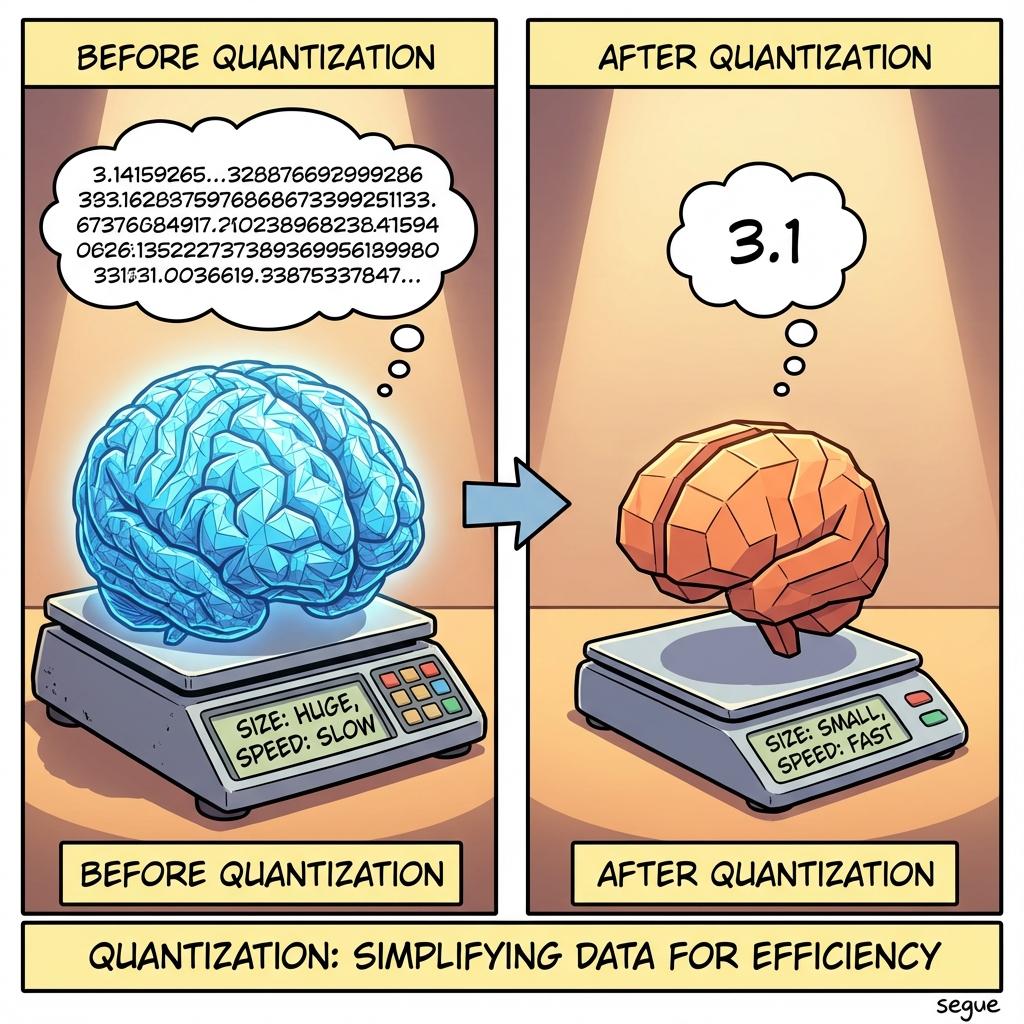

quantization

/ˌkwɒntɪˈzeɪʃən/reducing the precision of model weights (e.g., to 4-bit) to save memory

“4-bit quantization allows running a 70B model on a single GPU.”

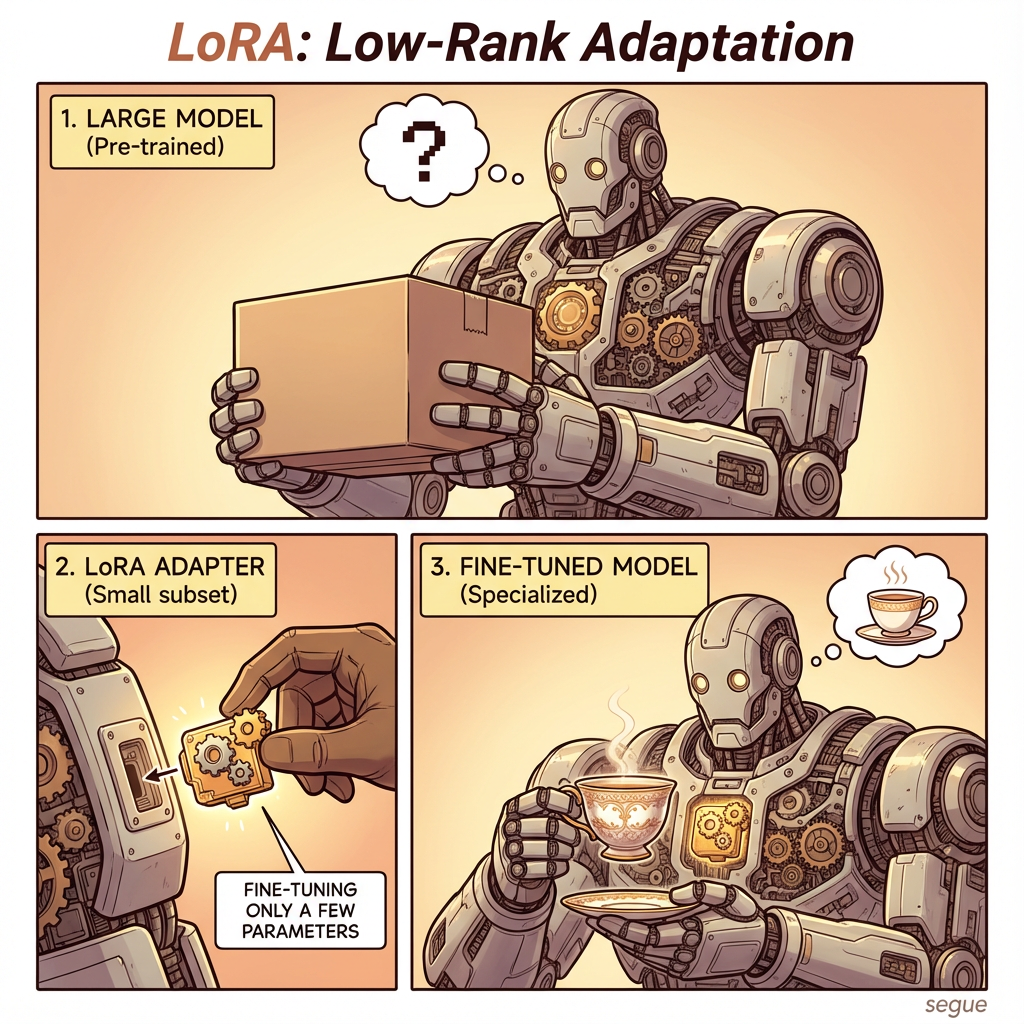

LoRA

/ˈlɔːrə/Low-Rank Adaptation; fine-tuning only a small subset of parameters

“LoRA makes fine-tuning large models computationally affordable.”

distillation

/ˌdɪstɪˈleɪʃən/training a smaller 'student' model to mimic a larger 'teacher' model

“Distillation produced a small model with near-GPT-4 performance on specific tasks.”

Mixture of Experts

/ˈmɪkstʃər əv ˈekspɜːrts/using multiple specialized sub-models (experts) and routing tokens to them

“Mixture of Experts (MoE) scales capacity without increasing inference cost.”

speculative decoding

/ˈspekjələtɪv diˈkoʊdɪŋ/using a small model to draft tokens for verification by a large model

“Speculative decoding doubled the inference speed without losing quality.”

KV cache

/ˌkeɪ ˈviː ˌkæʃ/storing attention calculations to speed up generation

“Optimizing the KV cache usage reduced memory footprint significantly.”

context caching

/ˈkɒntekst ˌkæʃɪŋ/saving the processed state of a prompt prefix to avoid recomputing it

“Context caching is ideal for chatting with long documents.”

More from Artificial Intelligence

Explore other vocabulary categories in this collection.