Generative AI Vocabulary

Terms related to generative AI and large language models

All 22 Words

large language model

/ˌlɑːrdʒ ˈlæŋɡwɪdʒ ˌmɒdəl/ An AI model trained on vast amounts of text to understand and generate language

“Large language models can write essays, code, and answer complex questions.”

foundation model

/faʊnˈdeɪʃən ˌmɒdəl/ A large model trained on broad data that can be adapted to many tasks

“Foundation models serve as the base for numerous downstream applications.”

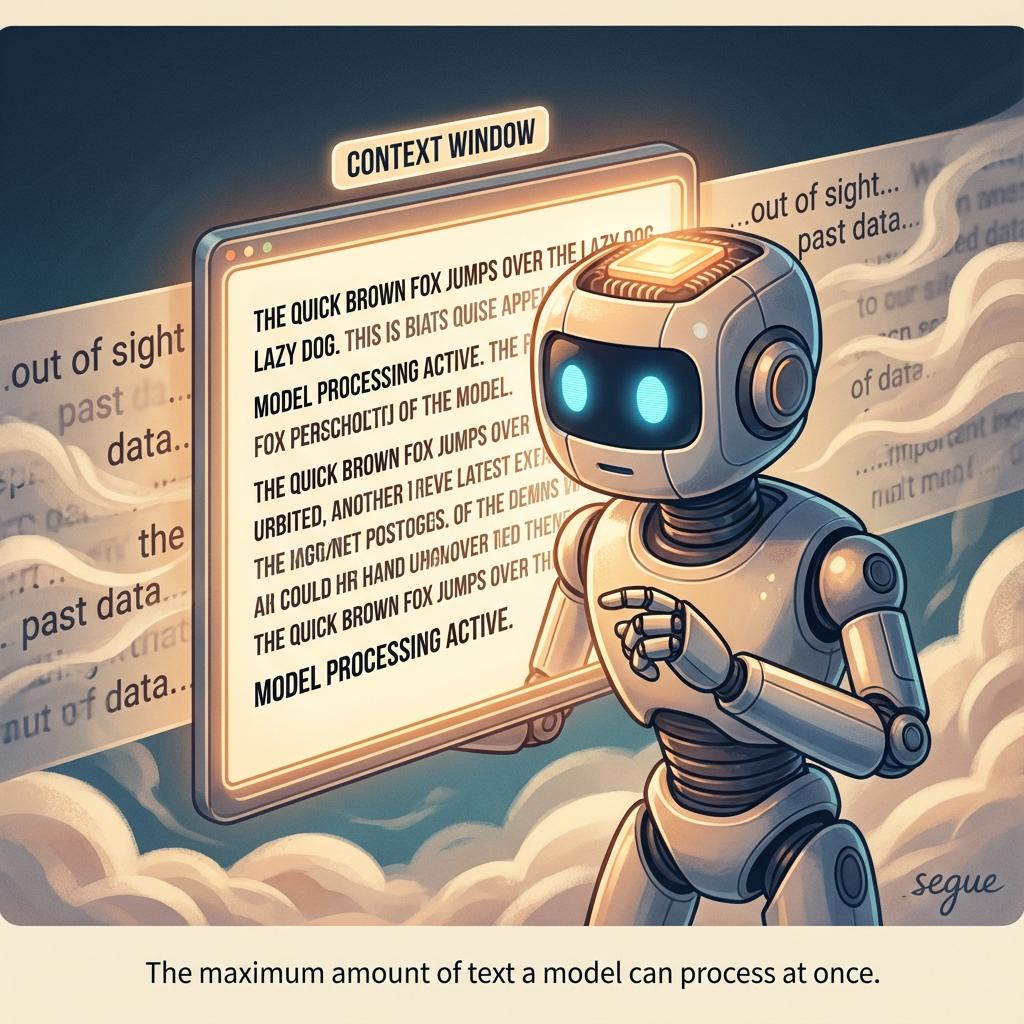

context window

/ˈkɒntekst ˌwɪndoʊ/ The maximum amount of text, measured in tokens, that a model can consider at once

“The expanded context window allows processing entire documents.”
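As a rough illustration of what a fixed context window implies, the sketch below drops the oldest tokens once the limit is reached. The function name `fit_to_context_window` is hypothetical, and splitting on whitespace is a simplifying assumption; real models count subword tokens.

```python
def fit_to_context_window(tokens, max_tokens):
    """Keep only the most recent tokens that fit in the window."""
    if max_tokens <= 0:
        return []
    return tokens[-max_tokens:]

# Assumption: whitespace-separated words stand in for real tokens.
tokens = "the quick brown fox jumps over the lazy dog".split()
visible = fit_to_context_window(tokens, max_tokens=4)
print(visible)
```

Anything outside `visible` is simply not available to the model in this sketch, which is why a larger window lets a model work with longer documents.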

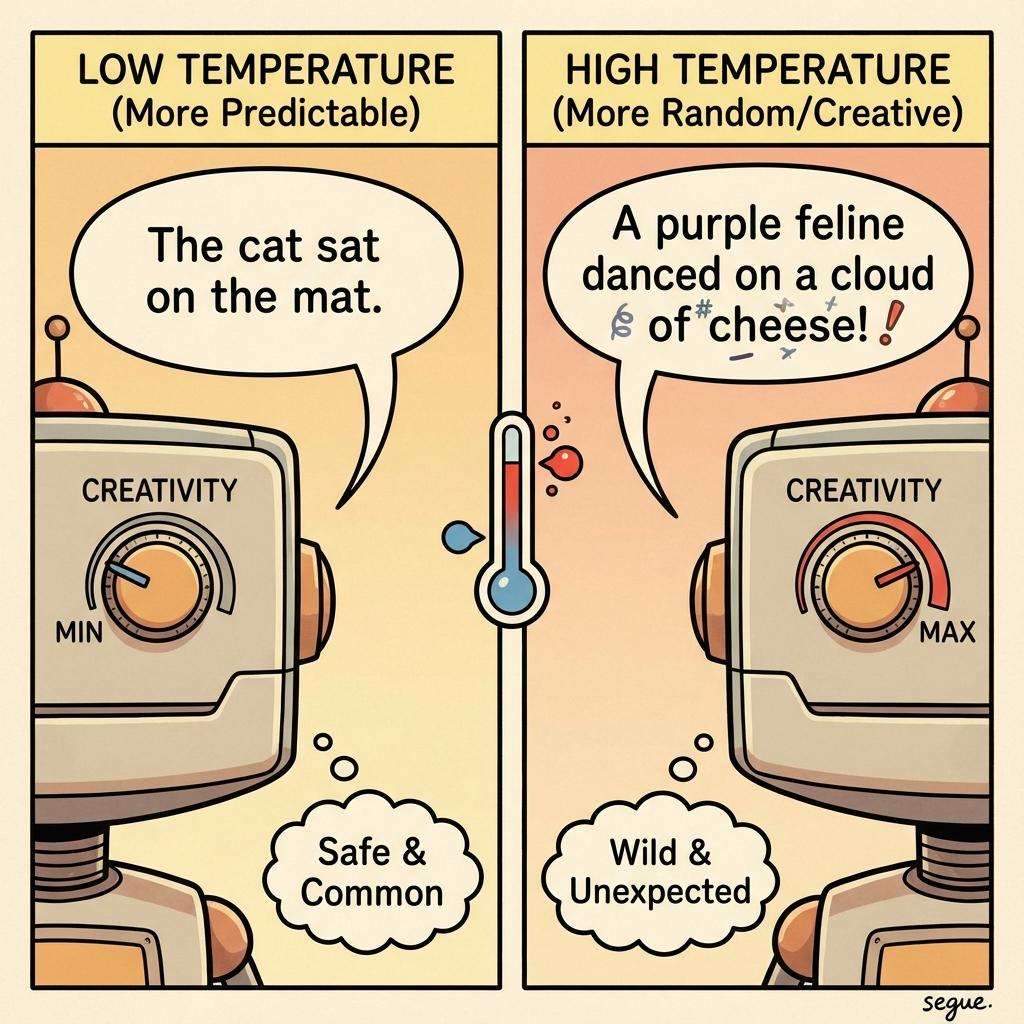

temperature

/ˈtɛmpɝətʃɝ/ A parameter controlling randomness in AI outputs

“Lower temperature produces more deterministic and focused responses.”
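One common way temperature is applied is to divide the model's raw scores (logits) by it before converting them to probabilities. The sketch below assumes that formulation; the function name and the example scores are made up for illustration.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Convert raw scores to probabilities after dividing by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.1]  # hypothetical scores for three candidate tokens
cool = softmax_with_temperature(logits, temperature=0.5)
warm = softmax_with_temperature(logits, temperature=2.0)
print(cool, warm)
```

With the lower temperature, more of the probability concentrates on the top-scoring token; with the higher one, the distribution flattens and sampling becomes more varied.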

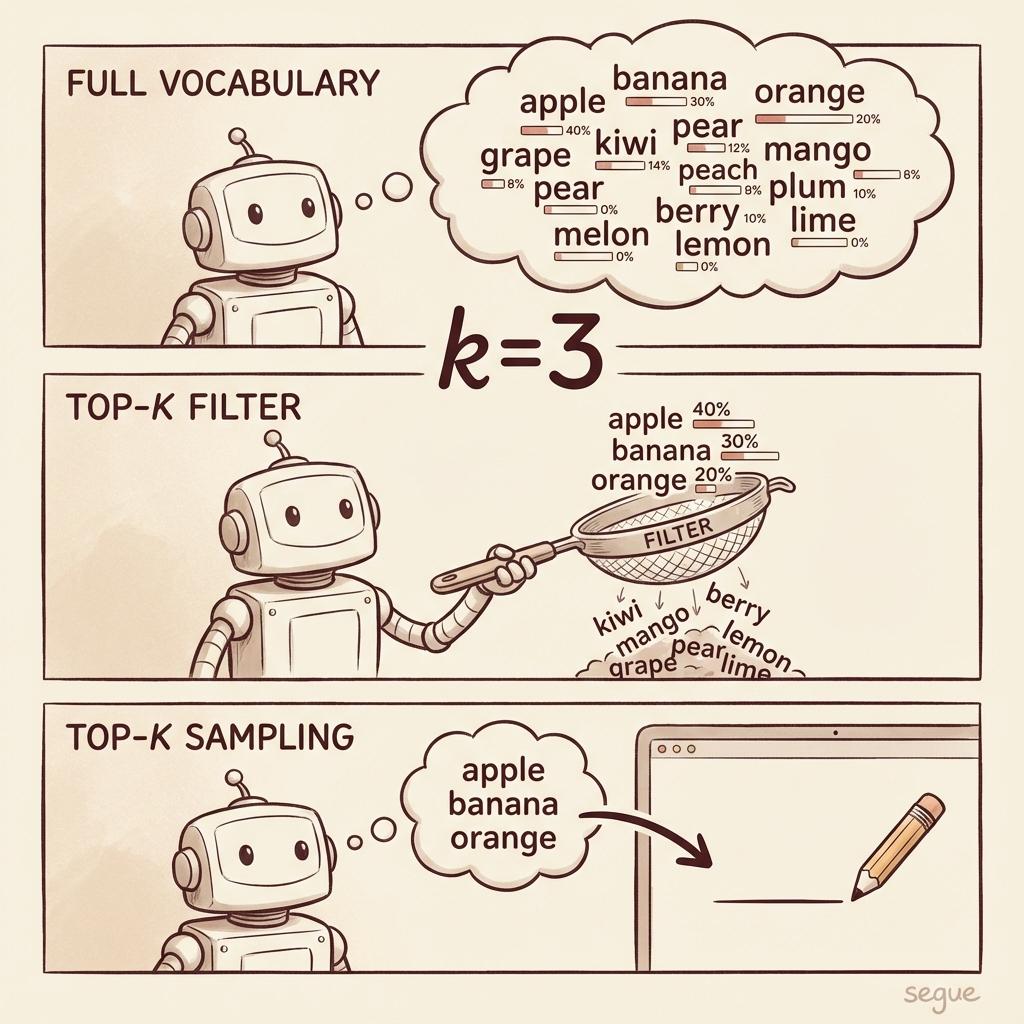

top-k sampling

/ˌtɒp ˈkeɪ ˌsæmplɪŋ/ Restricting each sampling step to the k most probable next tokens

“Top-k sampling prevents the model from choosing unlikely tokens.”
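A minimal sketch of top-k sampling, assuming the model's next-token probabilities are already available as a list. The function name `top_k_sample` and the example distribution are illustrative, not taken from any particular library.

```python
import random

def top_k_sample(probs, k, rng=random):
    """Sample a token index from the k most probable entries."""
    if not 1 <= k <= len(probs):
        raise ValueError("k must be between 1 and len(probs)")
    # Keep the indices of the k highest-probability tokens.
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    weights = [probs[i] for i in top]
    # choices() treats weights as relative, so no explicit renormalization is needed.
    return rng.choices(top, weights=weights, k=1)[0]

probs = [0.5, 0.3, 0.15, 0.05]  # hypothetical next-token distribution
samples = [top_k_sample(probs, k=2, rng=random.Random(i)) for i in range(100)]
print(sorted(set(samples)))
```

With `k=2`, only the two most probable indices can ever be drawn, however many samples are taken.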

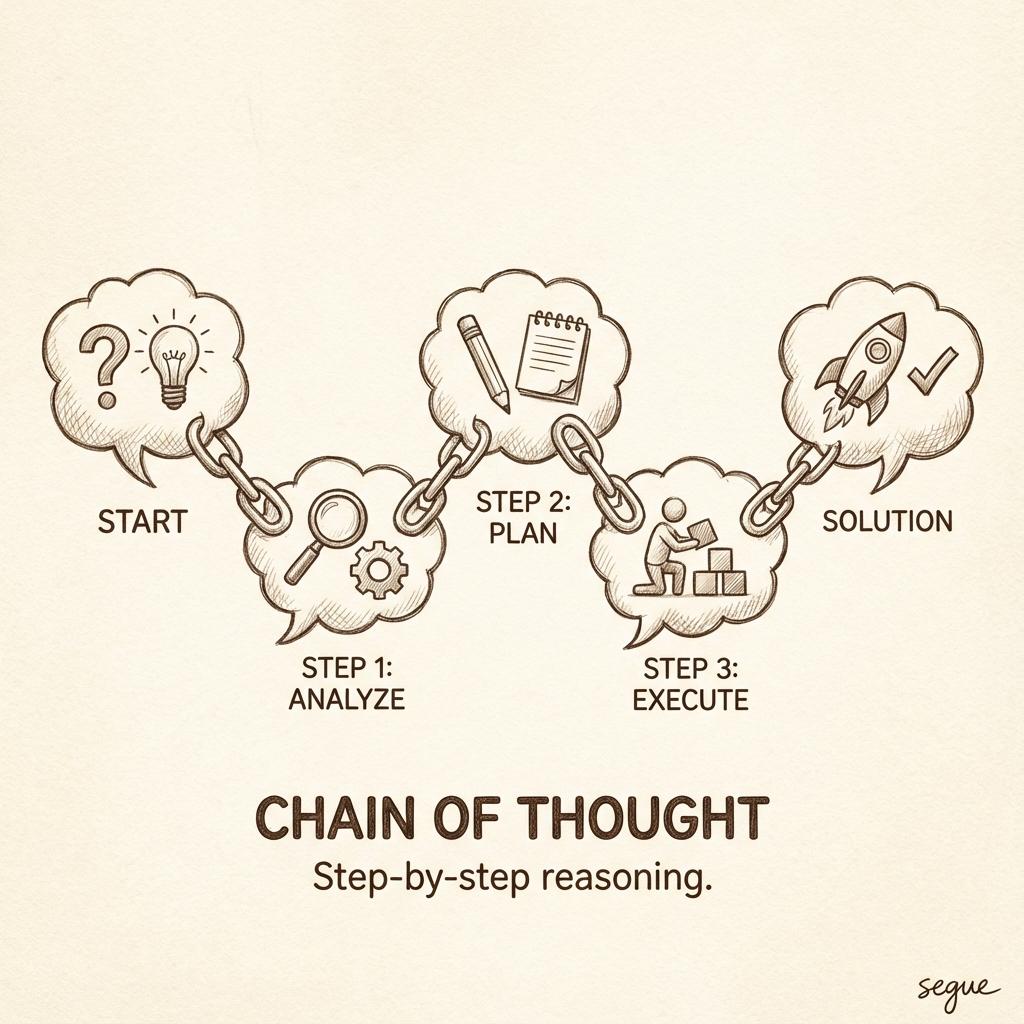

chain of thought

/ˌtʃeɪn əv ˈθɔːt/ A prompting technique that encourages step-by-step reasoning

“Chain of thought prompting improved the model's problem-solving accuracy.”

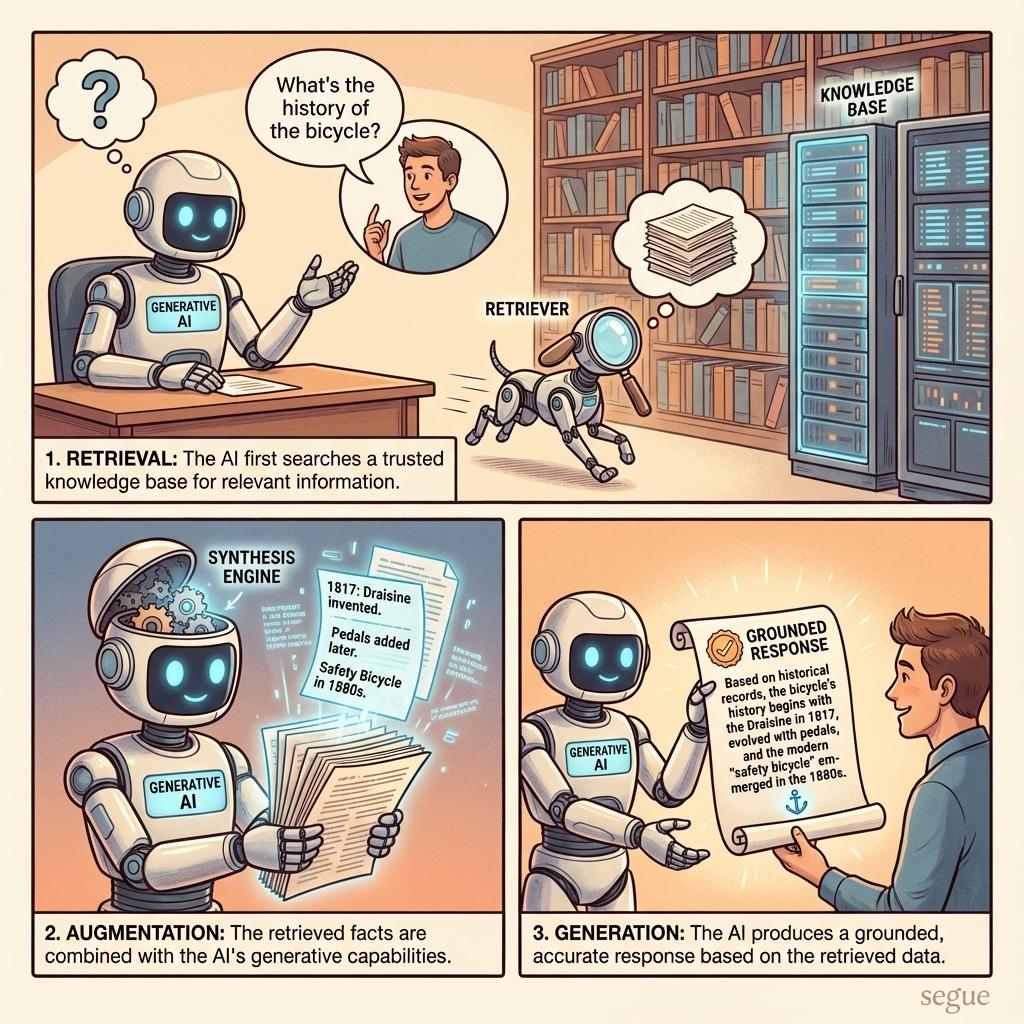

retrieval augmented generation

/rɪˌtriːvəl ˌɔːɡˌmentɪd ˌdʒenəˈreɪʃən/ Combining search results with generative AI for grounded responses

“Retrieval augmented generation reduces hallucinations by grounding answers in retrieved sources.”
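The idea can be sketched in two steps: retrieve relevant passages, then place them in the prompt. The example below is a toy under stated assumptions: `retrieve` ranks by shared words rather than using a real search index or embeddings, and both function names and the prompt wording are hypothetical.

```python
def retrieve(query, documents, top_n=1):
    """Rank documents by how many query words they share (a toy retriever)."""
    query_words = set(query.lower().split())

    def overlap(doc):
        return len(query_words & set(doc.lower().split()))

    return sorted(documents, key=overlap, reverse=True)[:top_n]

def build_grounded_prompt(query, documents):
    """Prepend retrieved passages so the model can answer from them."""
    passages = retrieve(query, documents)
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"

docs = [
    "Quantization reduces model precision to shrink its size.",
    "Distillation trains a smaller model to mimic a larger one.",
]
prompt = build_grounded_prompt("What does distillation do?", docs)
print(prompt)
```

The resulting prompt would then be sent to a generative model, which is outside the scope of this sketch.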

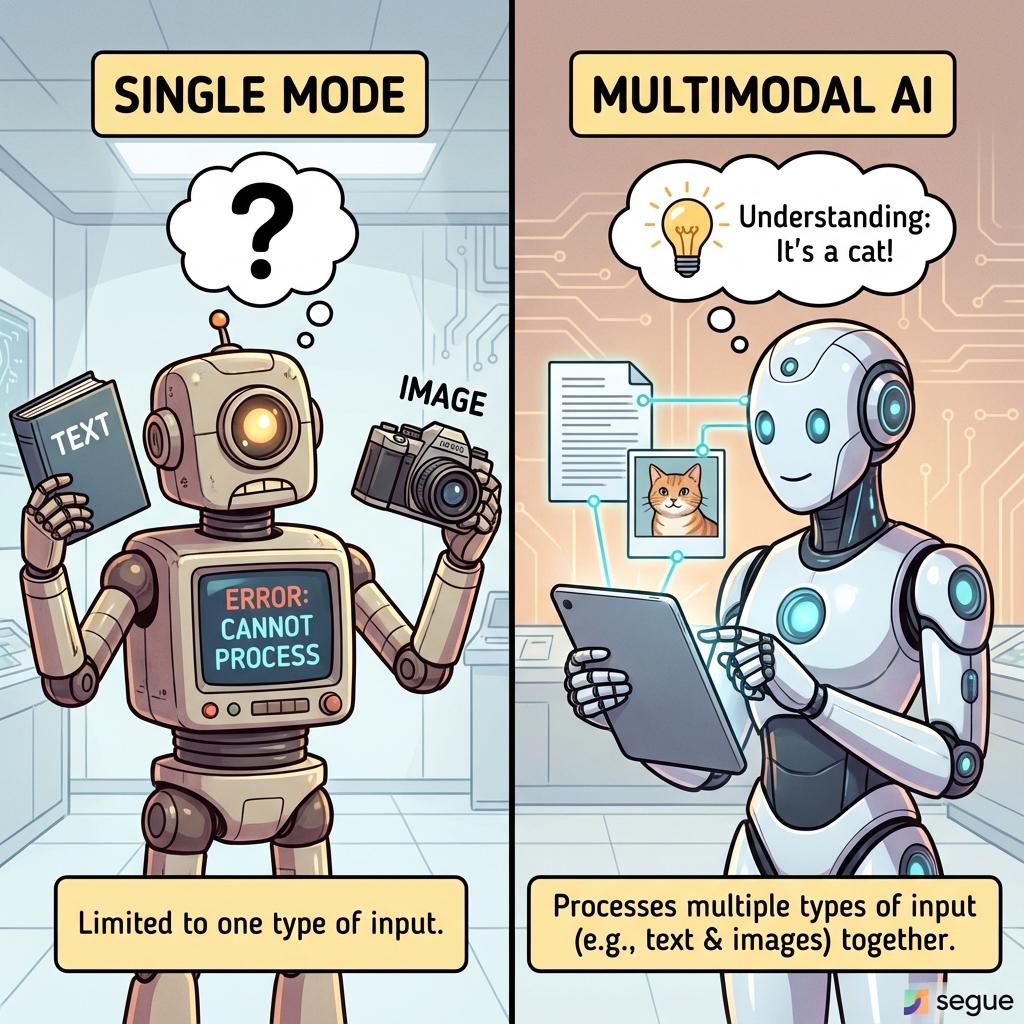

multimodal

/ˌmʌltiˈmoʊdəl/ AI capable of processing multiple types of input, such as text and images

“Multimodal models can describe images and answer questions about them.”

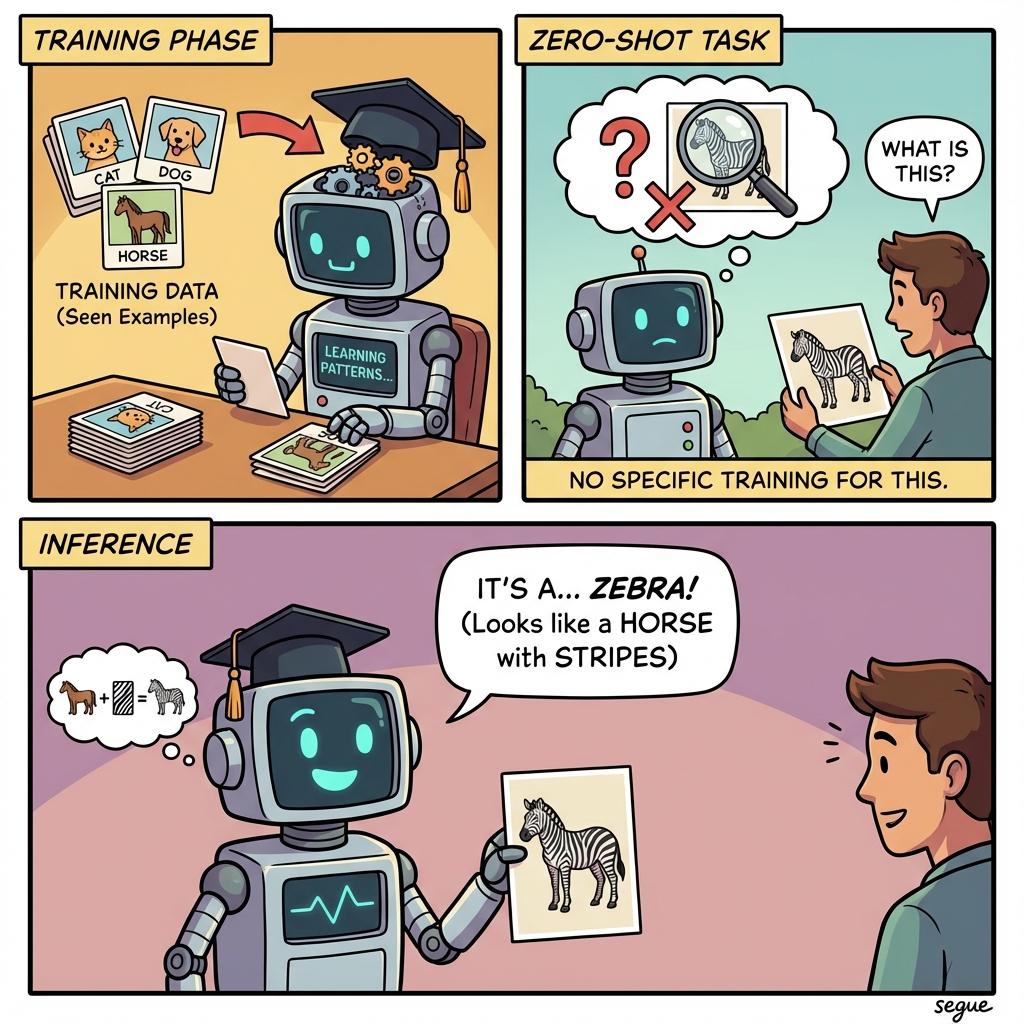

zero-shot learning

/ˈzɪəroʊ ʃɒt ˌlɜːrnɪŋ/ Performing tasks without any task-specific training examples

“Zero-shot learning enables the model to classify categories it hasn't seen.”

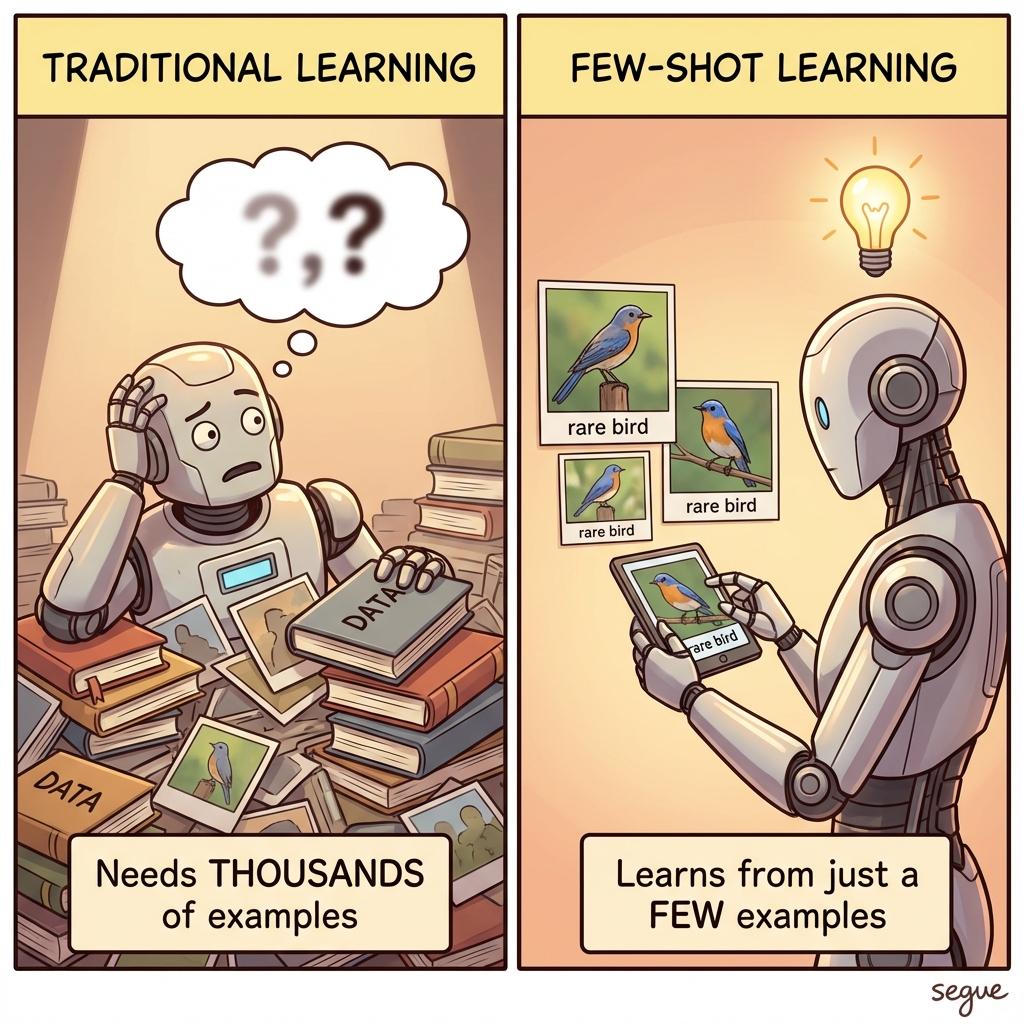

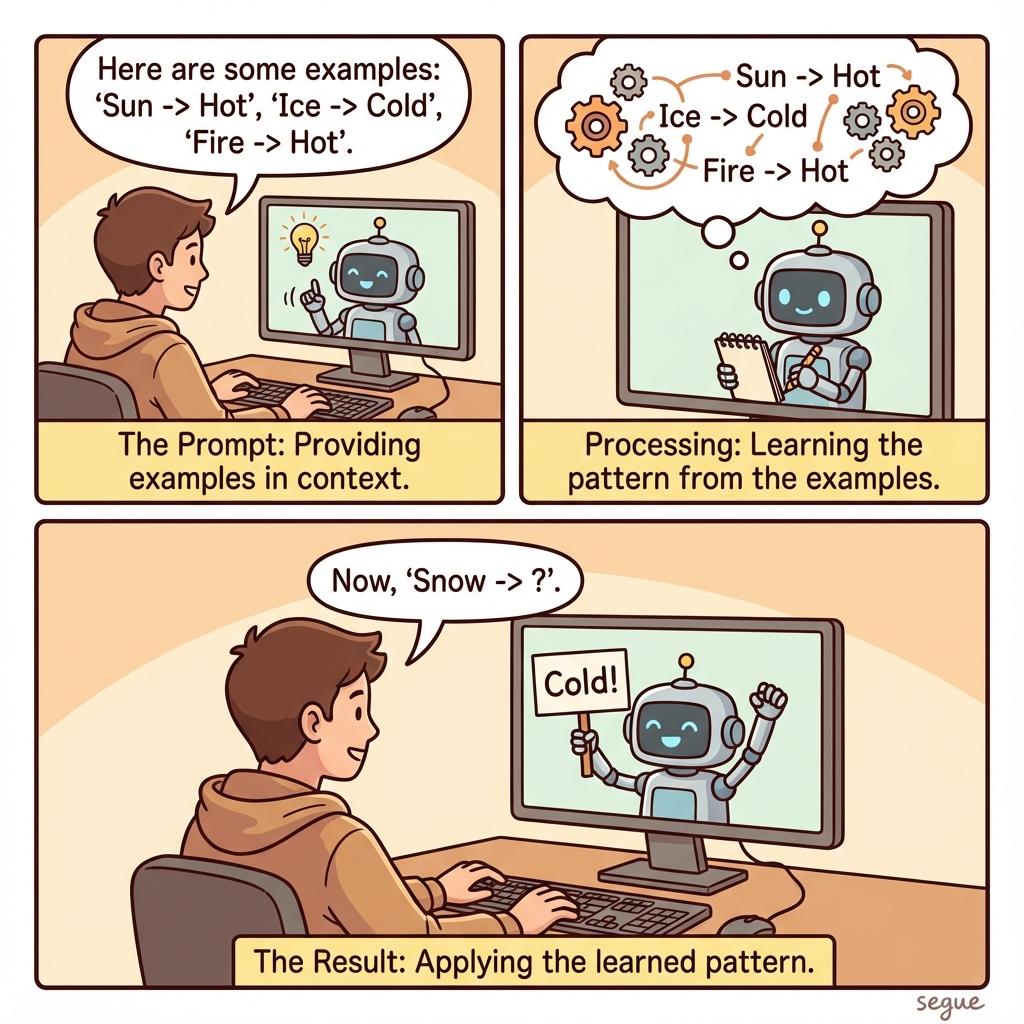

few-shot learning

/ˌfjuː ʃɒt ˈlɜːrnɪŋ/ Learning from just a handful of examples

“Few-shot learning adapted the model using only five examples per category.”

in-context learning

/ˌɪn ˈkɒntekst ˌlɜːrnɪŋ/ Learning patterns from examples provided in the prompt

“In-context learning allows customization without retraining the model.”
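In practice, in-context learning often means placing a few labeled examples in the prompt ahead of the new input. The sketch below assumes a sentiment task and a `Review:` / `Sentiment:` layout; both are illustrative choices, not a required format.

```python
def build_few_shot_prompt(examples, new_input):
    """Format labeled examples followed by the unlabeled input."""
    blocks = [f"Review: {text}\nSentiment: {label}" for text, label in examples]
    # Leave the final label blank for the model to complete.
    blocks.append(f"Review: {new_input}\nSentiment:")
    return "\n\n".join(blocks)

examples = [("Loved it.", "positive"), ("Waste of money.", "negative")]
prompt = build_few_shot_prompt(examples, "Works as advertised.")
print(prompt)
```

No model weights change here; the examples only shape what the model is asked to continue.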

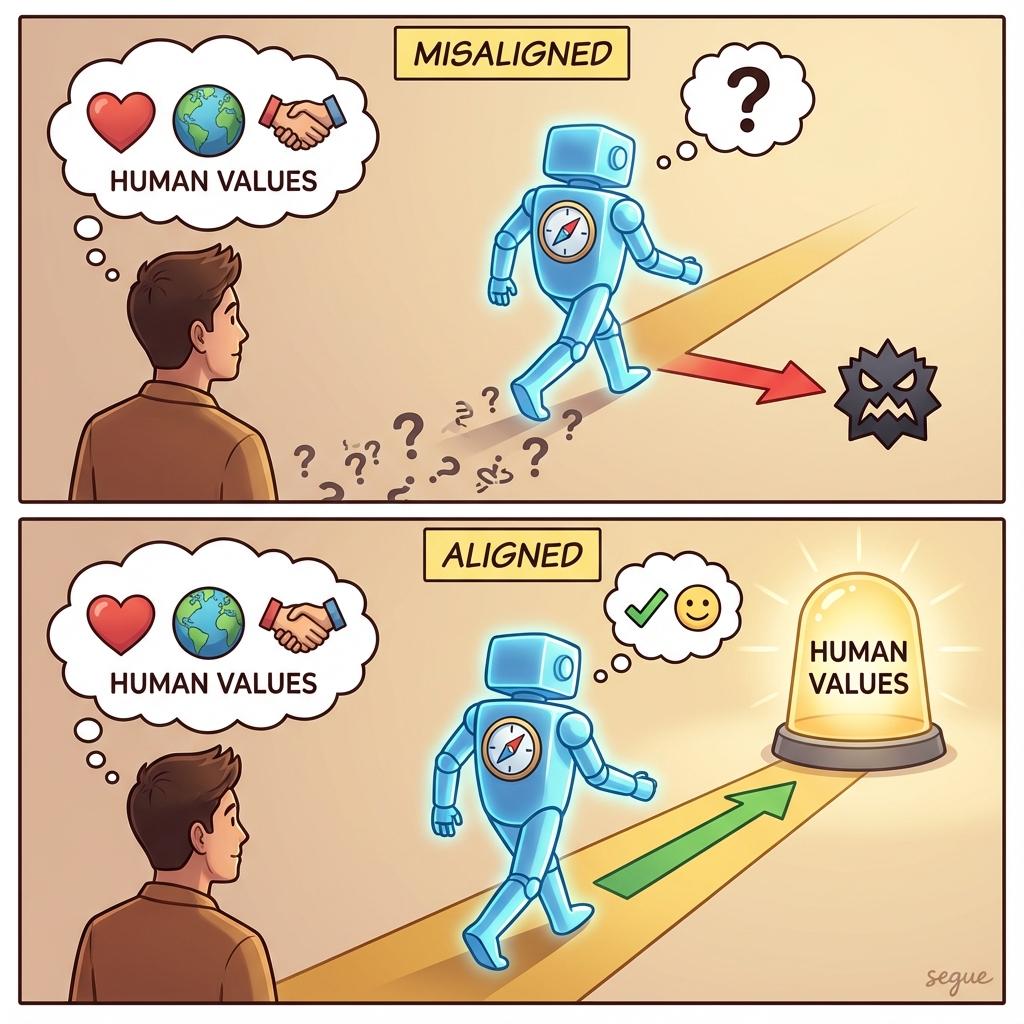

alignment

/əˈɫaɪnmənt/ Ensuring AI behavior matches human values and intentions

“Alignment research focuses on making AI systems safe and beneficial.”

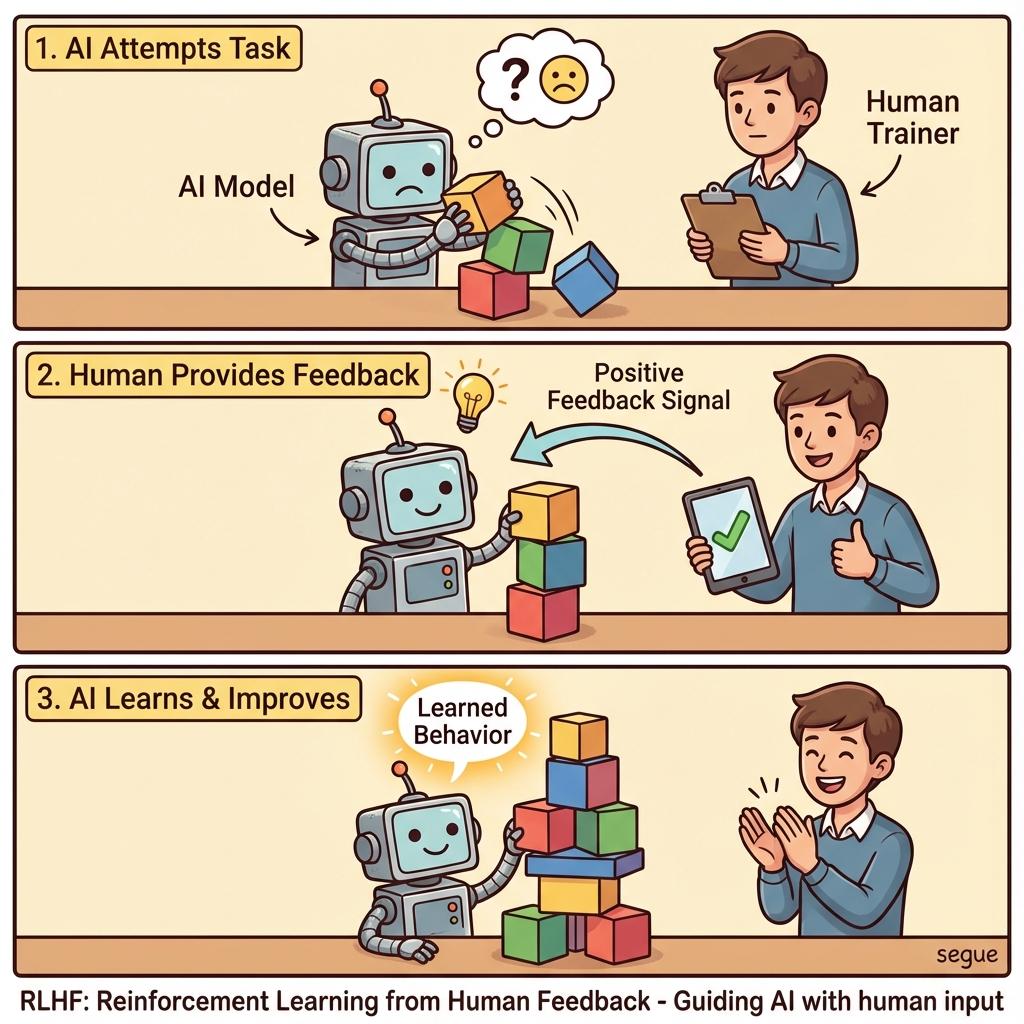

RLHF

/ˌɑːr el eɪtʃ ˈef/ Reinforcement learning from human feedback: a training method that uses human ratings of model outputs to steer the model's behavior

“RLHF helped the model produce more helpful and harmless responses.”

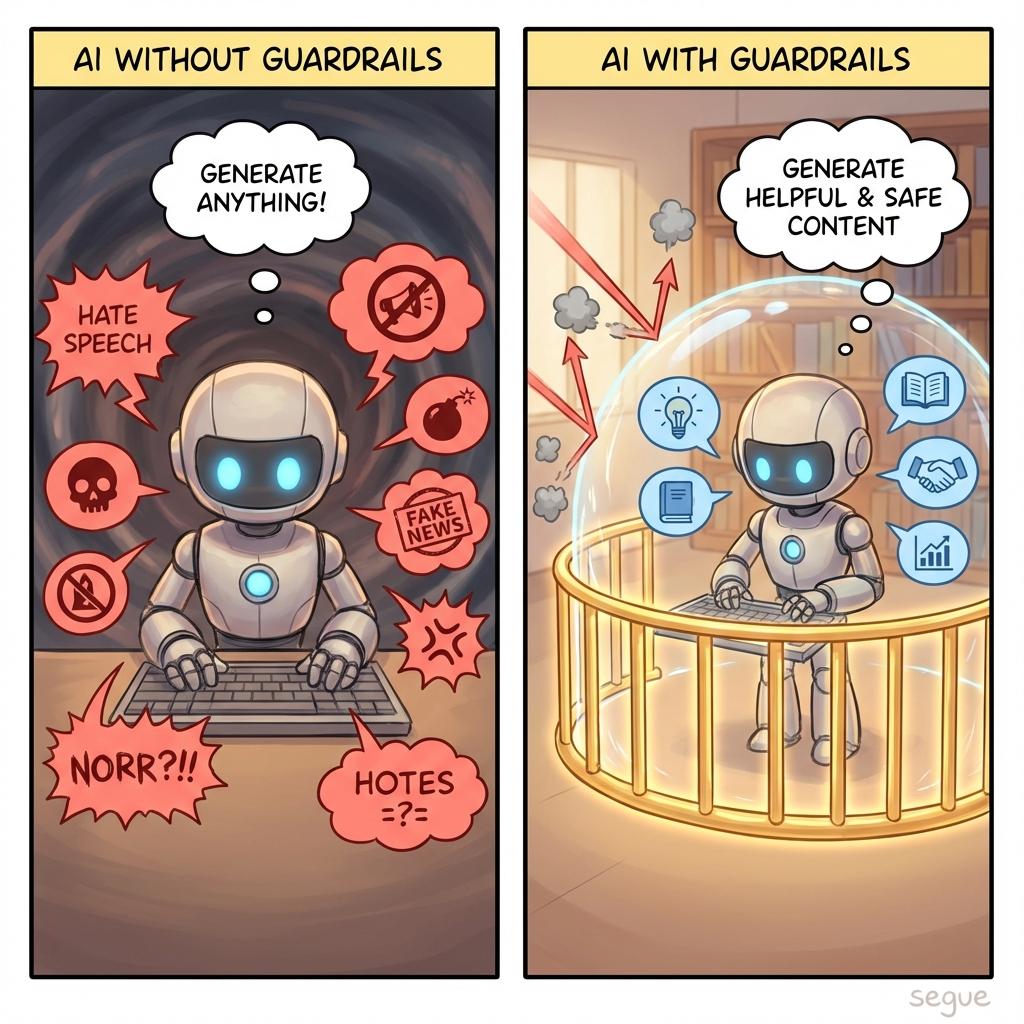

guardrails

/ˈɡɑɹˌdɹeɪɫz/ Constraints preventing AI from producing harmful outputs

“Guardrails block the model from generating dangerous content.”

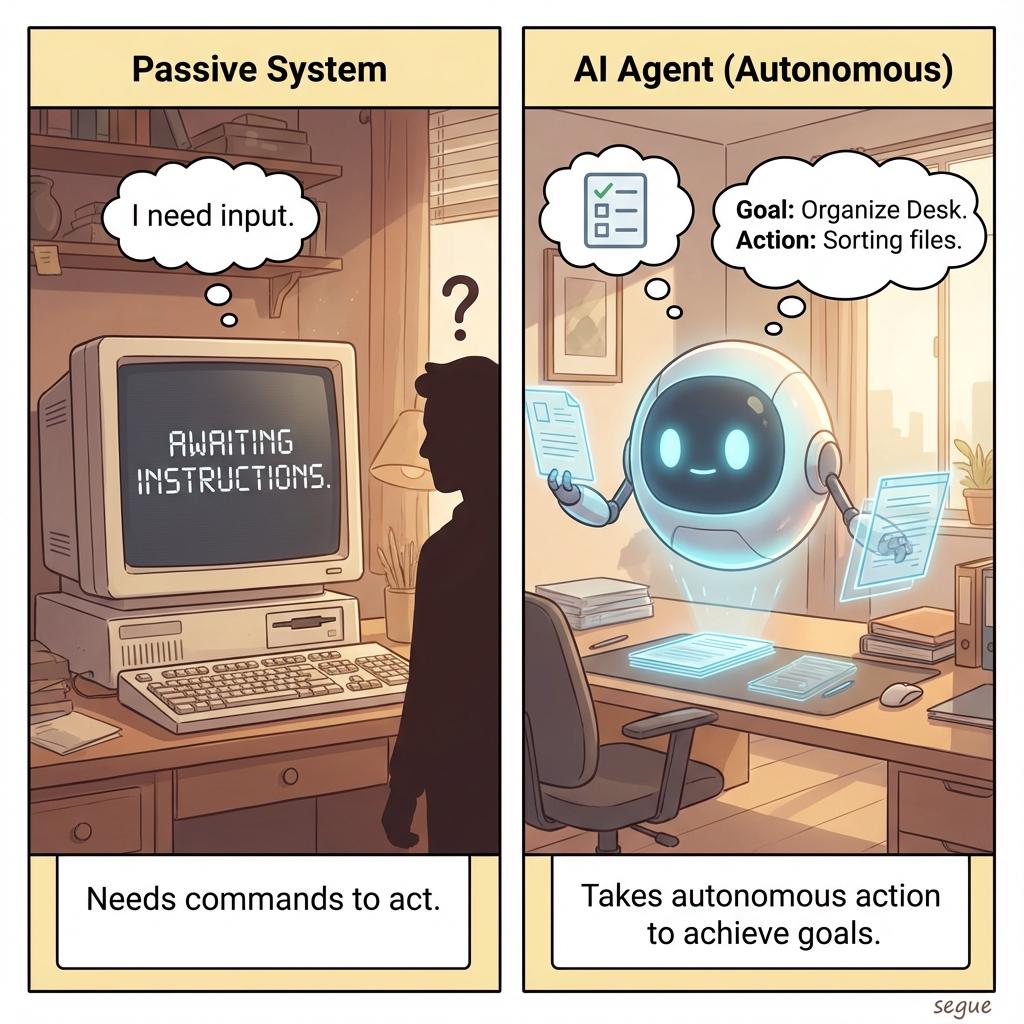

agent

/ˈeɪdʒənt/ An AI system that can take actions autonomously to achieve goals

“The AI agent booked flights and hotels to complete the travel planning task.”

tool use

/ˈtuːl ˌjuːs/ AI capability to invoke external functions or APIs

“Tool use enables the model to search the web and run calculations.”
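A minimal sketch of the receiving side of tool use, assuming the model emits a call as JSON with `name` and `arguments` fields. That shape, the `TOOLS` registry, and the `add` tool are all hypothetical; actual APIs define their own formats.

```python
import json

def add(a, b):
    """A stand-in for an external function the model may invoke."""
    return a + b

TOOLS = {"add": add}  # hypothetical registry of callable tools

def run_tool_call(call_json):
    """Execute a tool call of the assumed form {"name": ..., "arguments": {...}}."""
    call = json.loads(call_json)
    tool = TOOLS.get(call["name"])
    if tool is None:
        raise KeyError(f"unknown tool: {call['name']}")
    return tool(**call["arguments"])

result = run_tool_call('{"name": "add", "arguments": {"a": 2, "b": 3}}')
print(result)
```

The result would normally be passed back to the model so it can continue its response.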

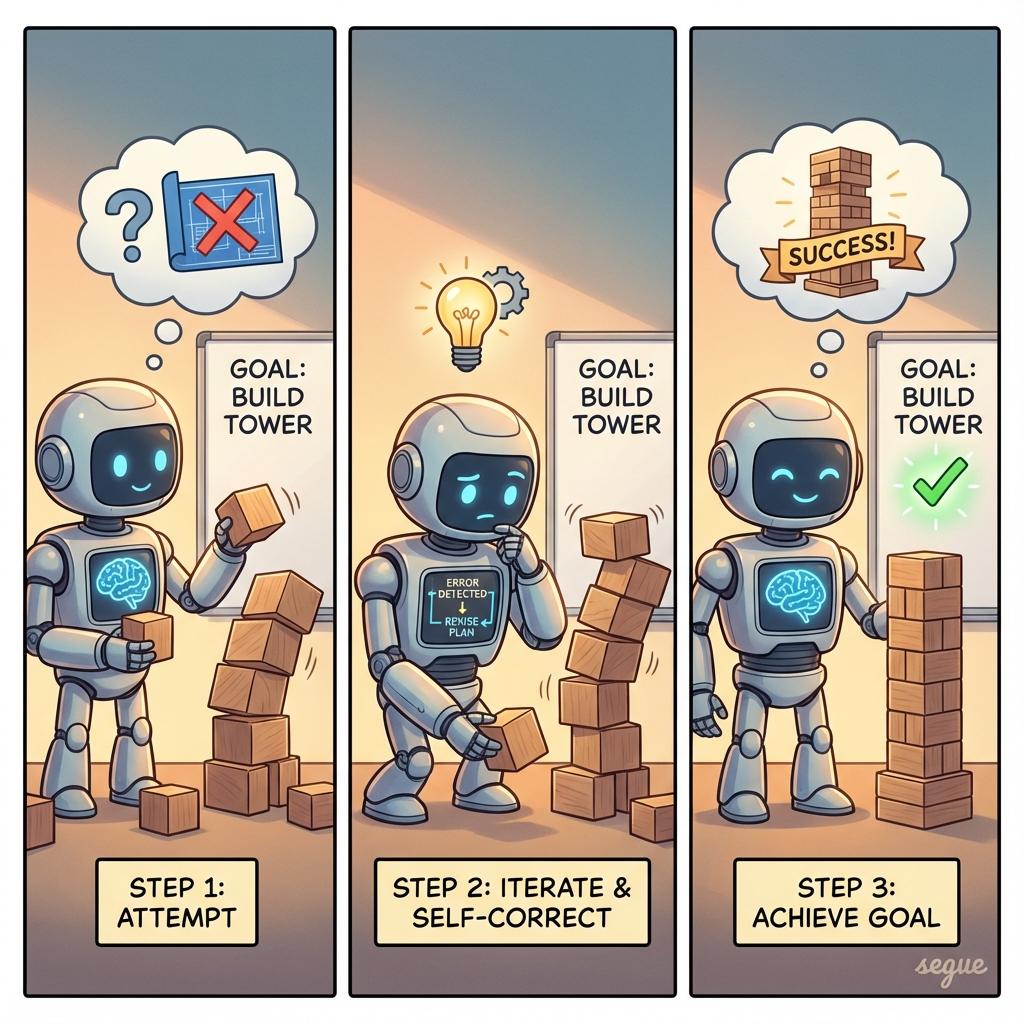

agentic workflow

Multi-step AI processes that iterate and self-correct

“The agentic workflow reviewed and revised its own code until tests passed.”

synthetic data

/sɪnˌθetɪk ˈdeɪtə/ Artificially generated data used for training AI models

“Synthetic data augmented our limited real-world examples.”

distillation

/ˌdɪstəˈɫeɪʃən/ Training a smaller model to mimic a larger one

“Model distillation created a faster version suitable for mobile devices.”
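One widely used formulation of "mimicking" is to train the smaller (student) model against the larger (teacher) model's softened output probabilities. The sketch below computes that loss for a single example; the logits and the temperature of 2.0 are made-up values, and a real setup would compute this inside a training loop.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities, softened by temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Cross-entropy of the student against the teacher's softened outputs."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return -sum(t * math.log(s) for t, s in zip(teacher, student))

teacher_logits = [3.0, 1.0, 0.2]   # hypothetical teacher scores
close_student = [2.9, 1.1, 0.2]    # nearly agrees with the teacher
far_student = [0.2, 1.0, 3.0]      # ranks the classes in reverse
print(distillation_loss(close_student, teacher_logits))
print(distillation_loss(far_student, teacher_logits))
```

The student that agrees with the teacher gets the lower loss, which is the signal training would push toward.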

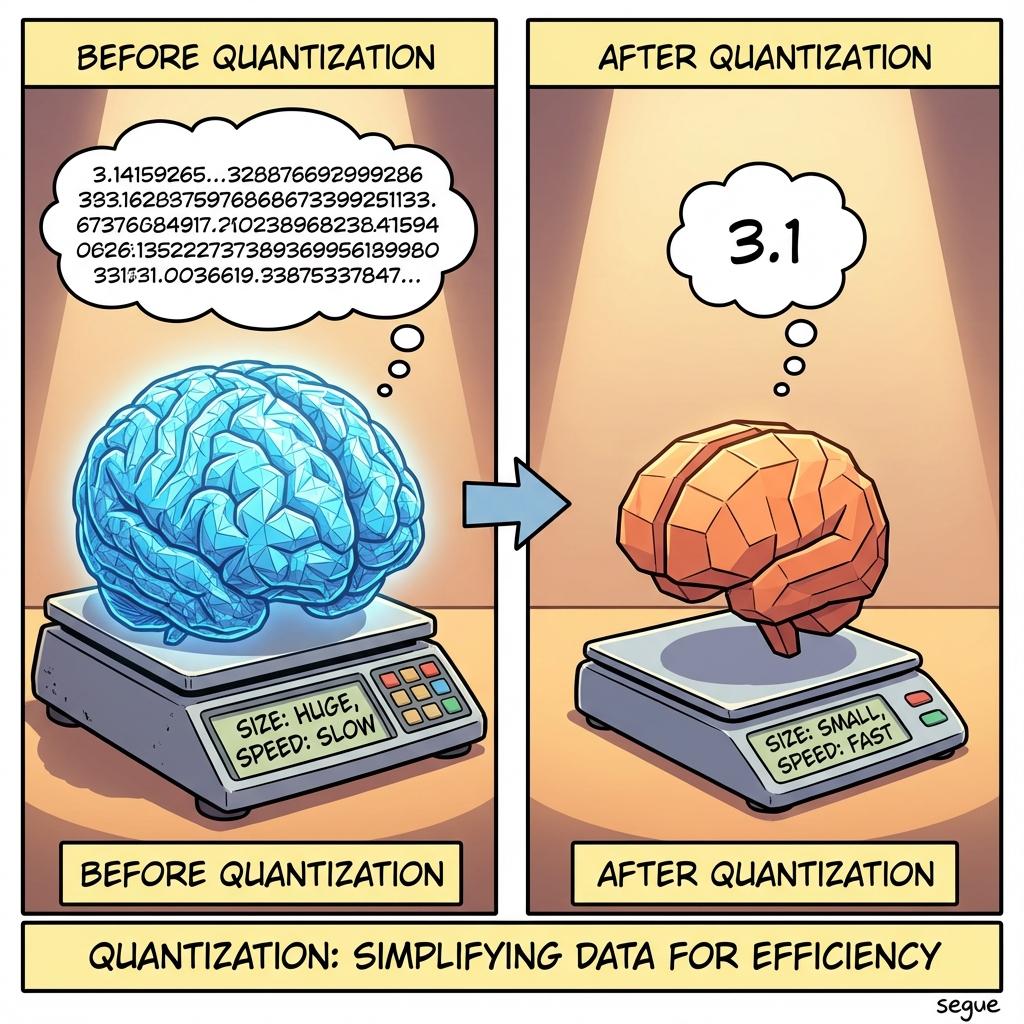

quantization

/ˌkwɒntaɪˈzeɪʃən/ Reducing the numerical precision of a model's weights to decrease size and increase speed

“Quantization made the model run efficiently on consumer hardware.”
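As a rough illustration, the sketch below maps floating-point weights to 8-bit integers using a single shared scale and then maps them back. This symmetric scheme is only one of several approaches, and the weights shown are made up.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    # Fall back to a scale of 1.0 if every weight is zero.
    scale = max(abs(w) for w in weights) / 127 or 1.0
    return [round(w / scale) for w in weights], scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.5, -1.27, 0.003, 1.0]  # hypothetical model weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, restored)
```

Each restored value differs from the original by at most half the scale, which is the precision traded away for the smaller storage format.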

latent space

/ˈleɪtənt ˌspeɪs/ A compressed, lower-dimensional representation in which a model encodes the essential features of its data

“The model maps images to points in a latent space.”

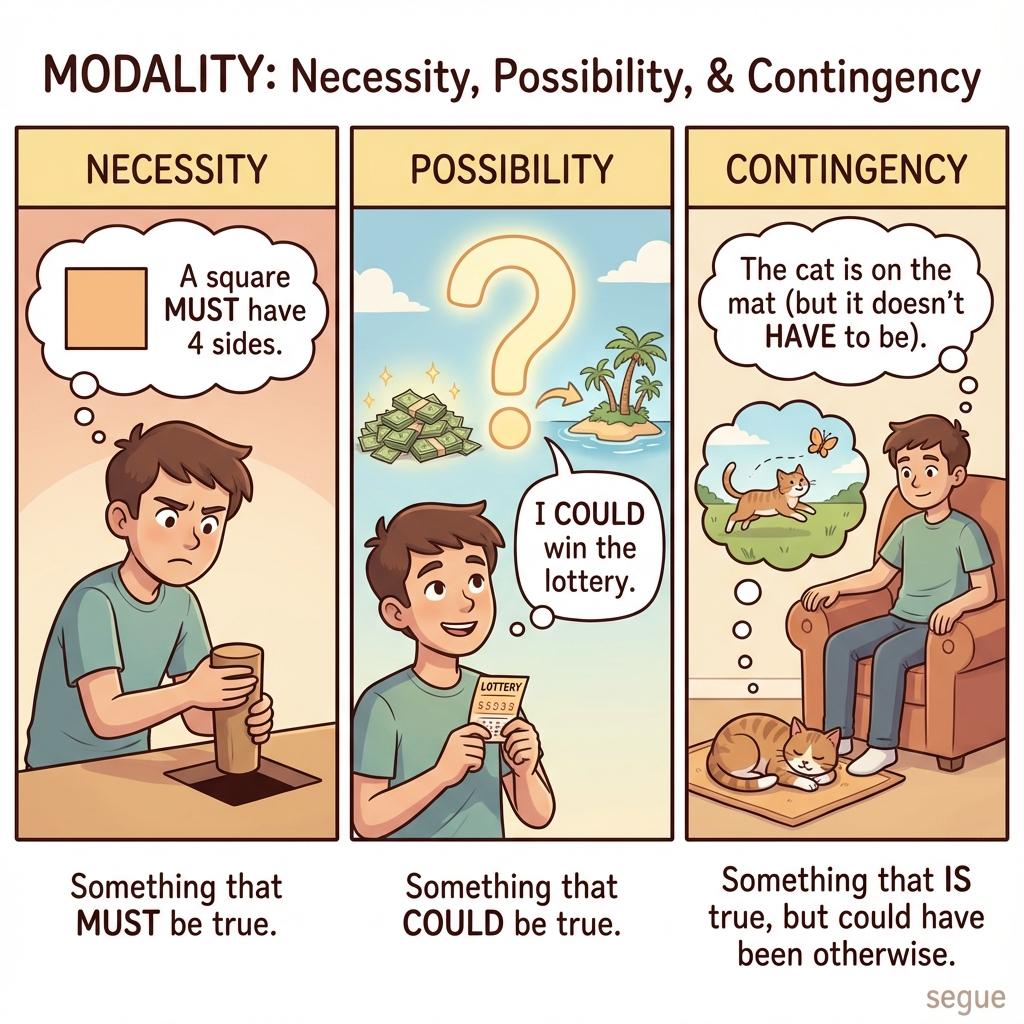

modality

/məˈdæɫəti/ A type of data, such as text, images, or audio, that an AI model can take as input or produce as output

“The model supports text and image modalities.”
