AI & Machine Learning Vocabulary

Artificial intelligence and machine learning terminology

All 22 Words

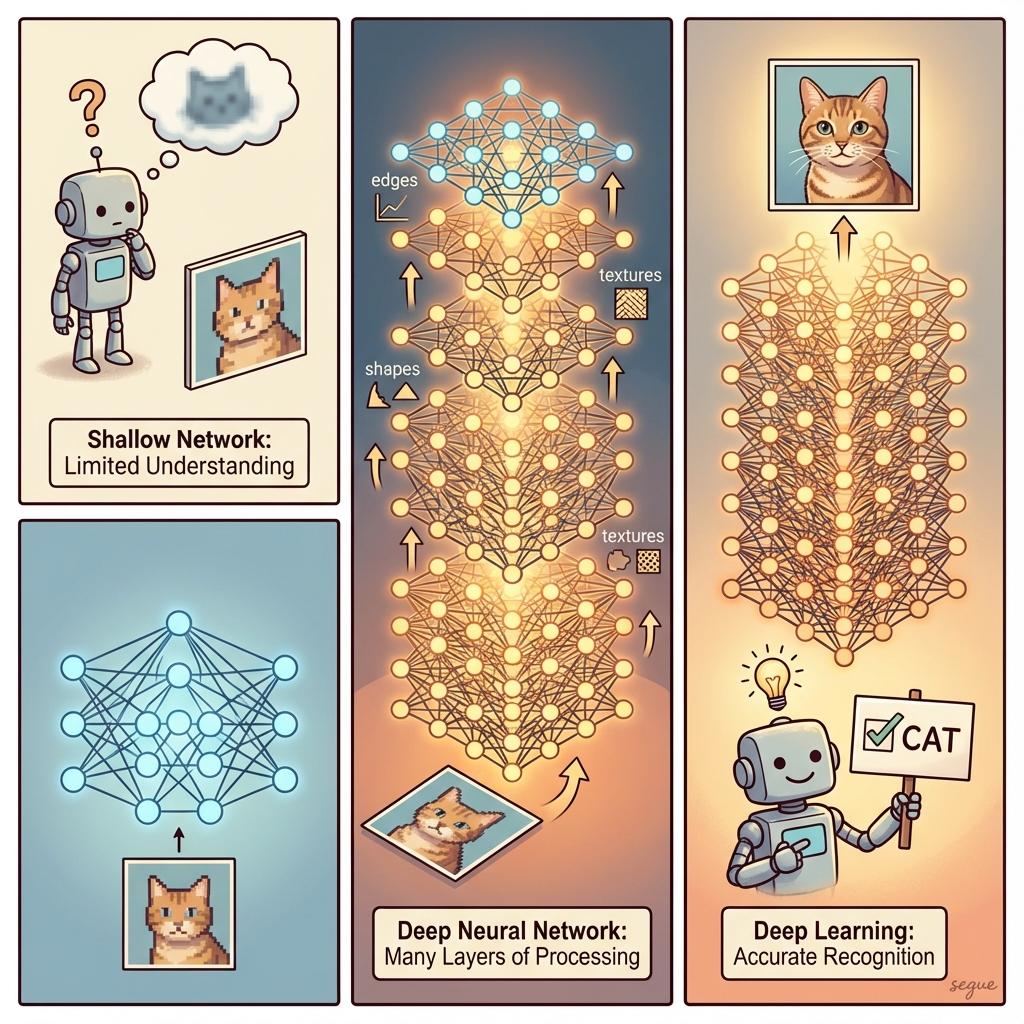

neural network

/ˌnjʊərəl ˈnetwɜːrk/ A computing system inspired by biological neural networks

“The neural network learned to recognize faces with remarkable accuracy.”

deep learning

/ˌdiːp ˈlɜːrnɪŋ/ Machine learning using neural networks with many layers

“Deep learning revolutionized image recognition and natural language processing.”

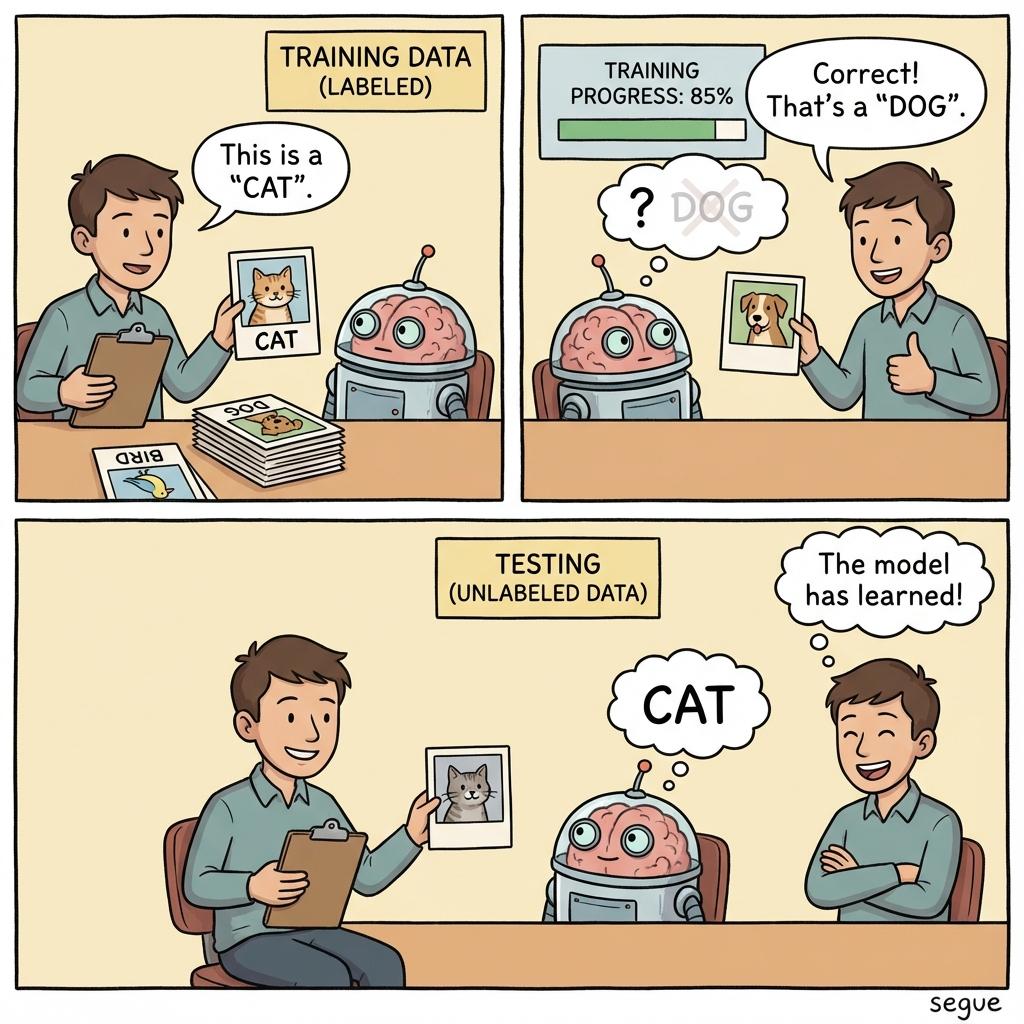

supervised learning

/ˌsuːpərˌvaɪzd ˈlɜːrnɪŋ/ Training a model on labeled data with known outputs

“Supervised learning requires a dataset with correct answers for each example.”

unsupervised learning

/ˌʌnˌsuːpərˌvaɪzd ˈlɜːrnɪŋ/ Finding patterns in data without labeled examples

“Unsupervised learning discovered customer segments we hadn't considered.”

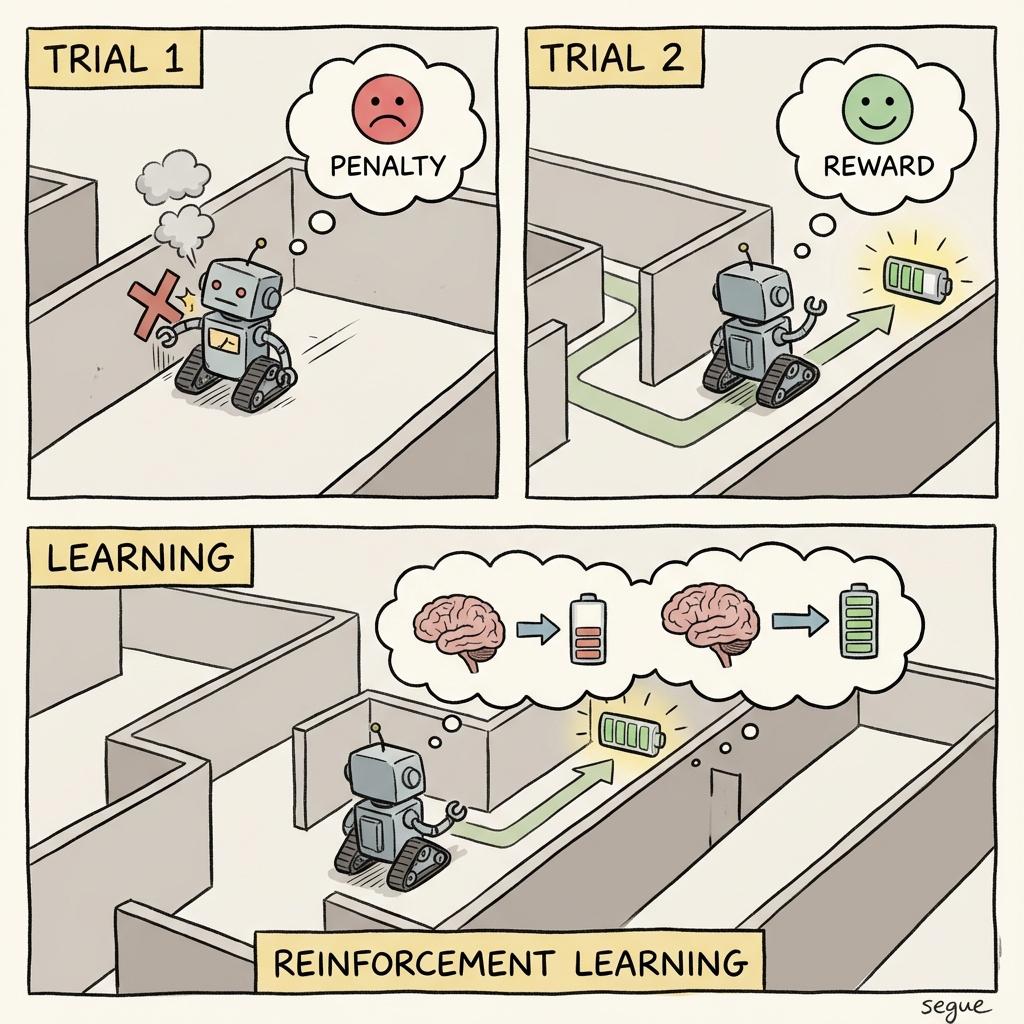

reinforcement learning

/ˌriːɪnˈfɔːrsmənt ˌlɜːrnɪŋ/ Learning through trial and error with rewards and penalties

“Reinforcement learning enabled the AI to master complex games.”

transformer

/tɹænsˈfɔɹmɝ/ A neural network architecture using self-attention mechanisms

“Transformer models like GPT have transformed natural language processing.”

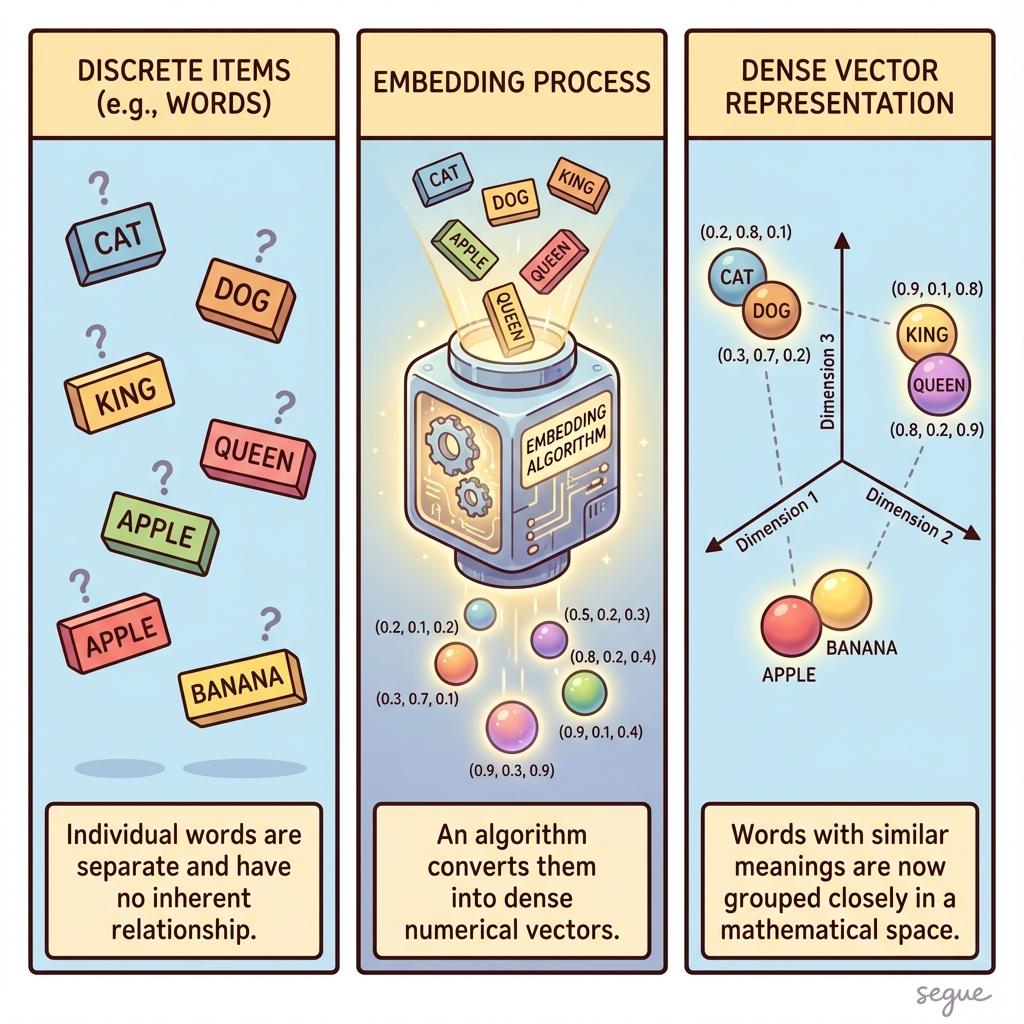

embedding

/ɛmˈbɛdɪŋ/ A dense vector representation of data in continuous space

“Word embeddings capture semantic relationships between terms.”

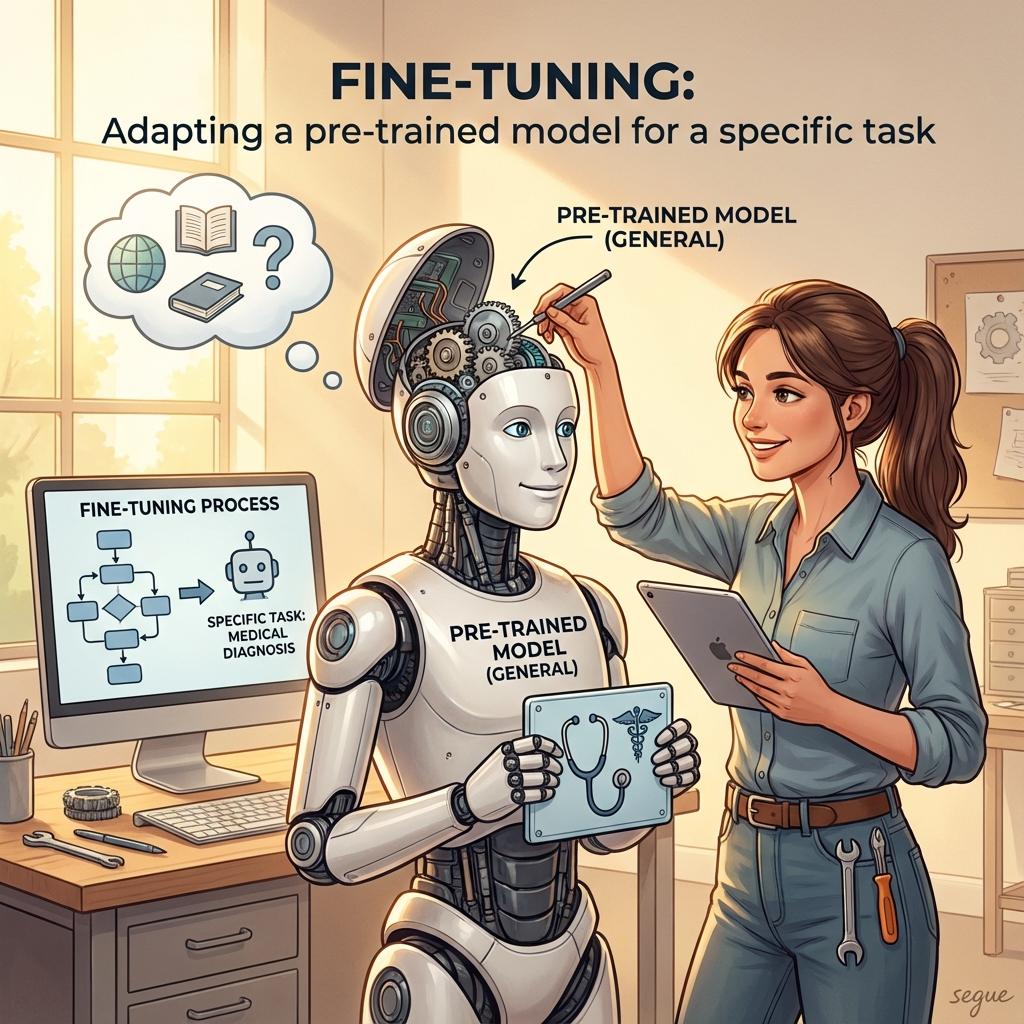

fine-tuning

/ˈfaɪn ˌtuːnɪŋ/ Adapting a pre-trained model for a specific task

“Fine-tuning the base model on our data improved accuracy significantly.”

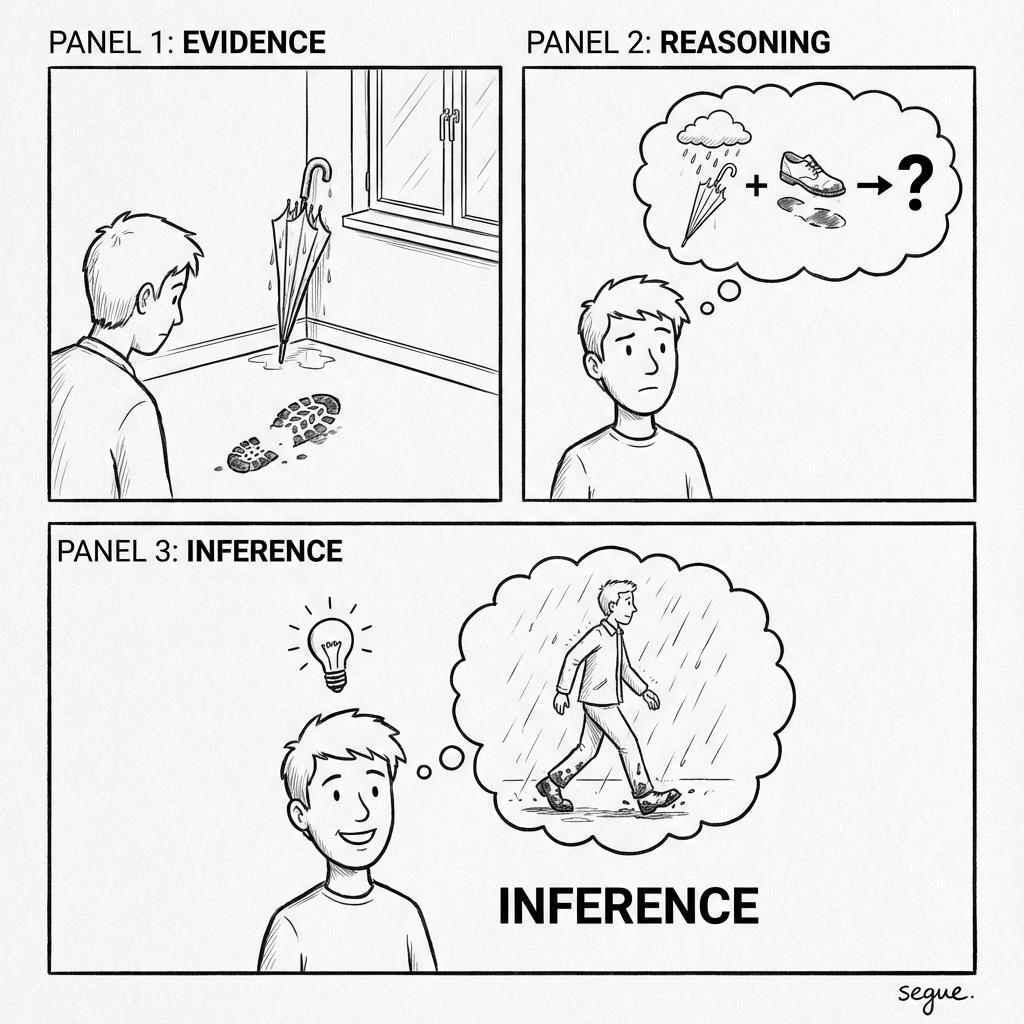

inference

/ˈɪnfɝəns/ Using a trained model to make predictions on new data

“Inference latency must be low for real-time applications.”

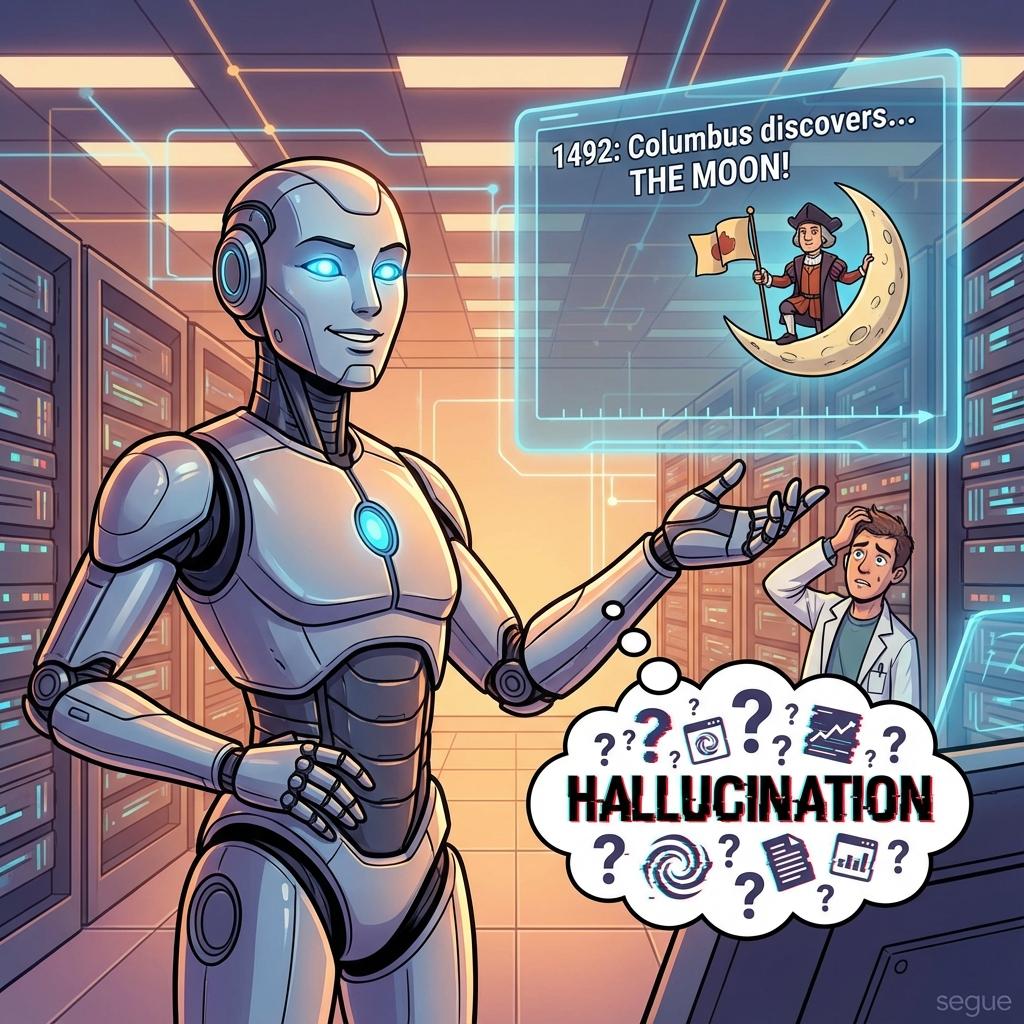

hallucination

/həˌɫusəˈneɪʃən/ When an AI generates false or fabricated information

“The model's hallucination produced a convincing but entirely fictional citation.”

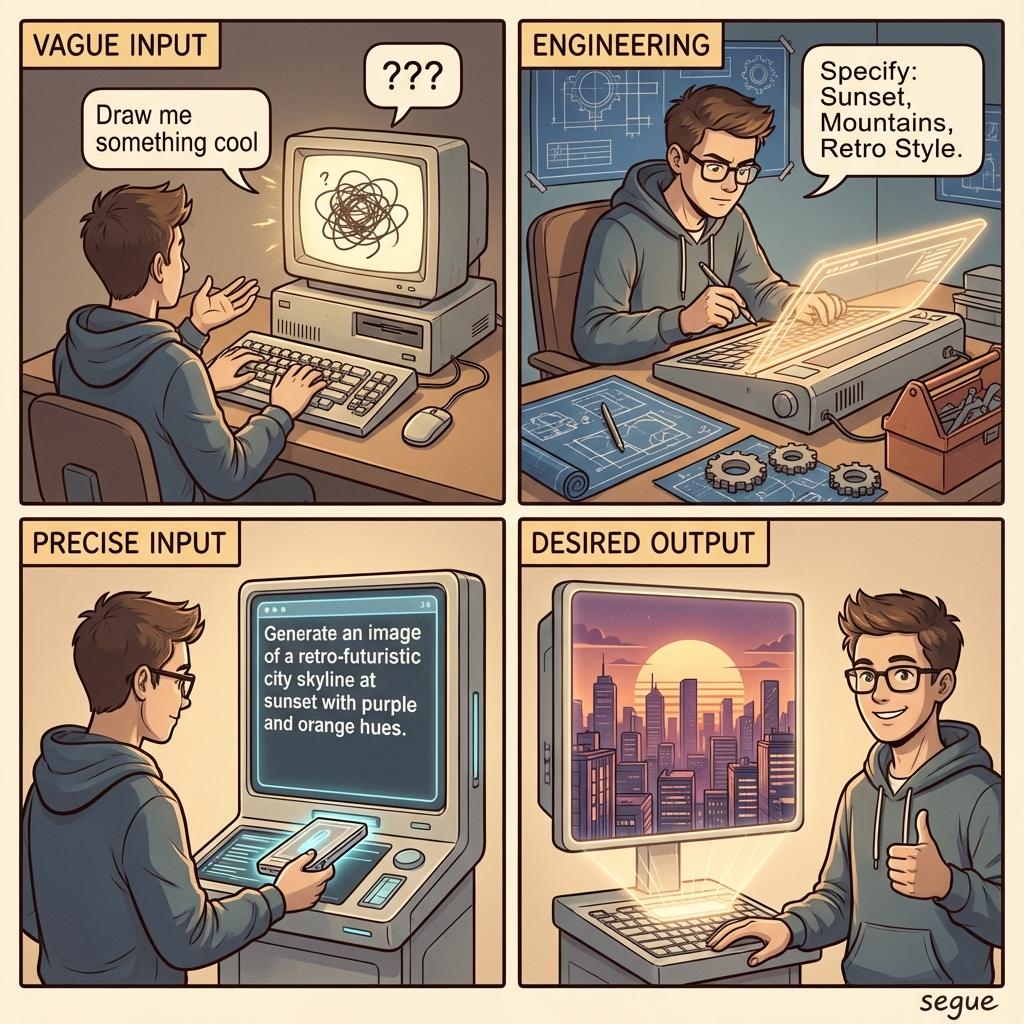

prompt engineering

/ˈprɒmpt ˌendʒɪˈnɪərɪŋ/ Crafting inputs to elicit desired outputs from AI models

“Effective prompt engineering dramatically improved the response quality.”

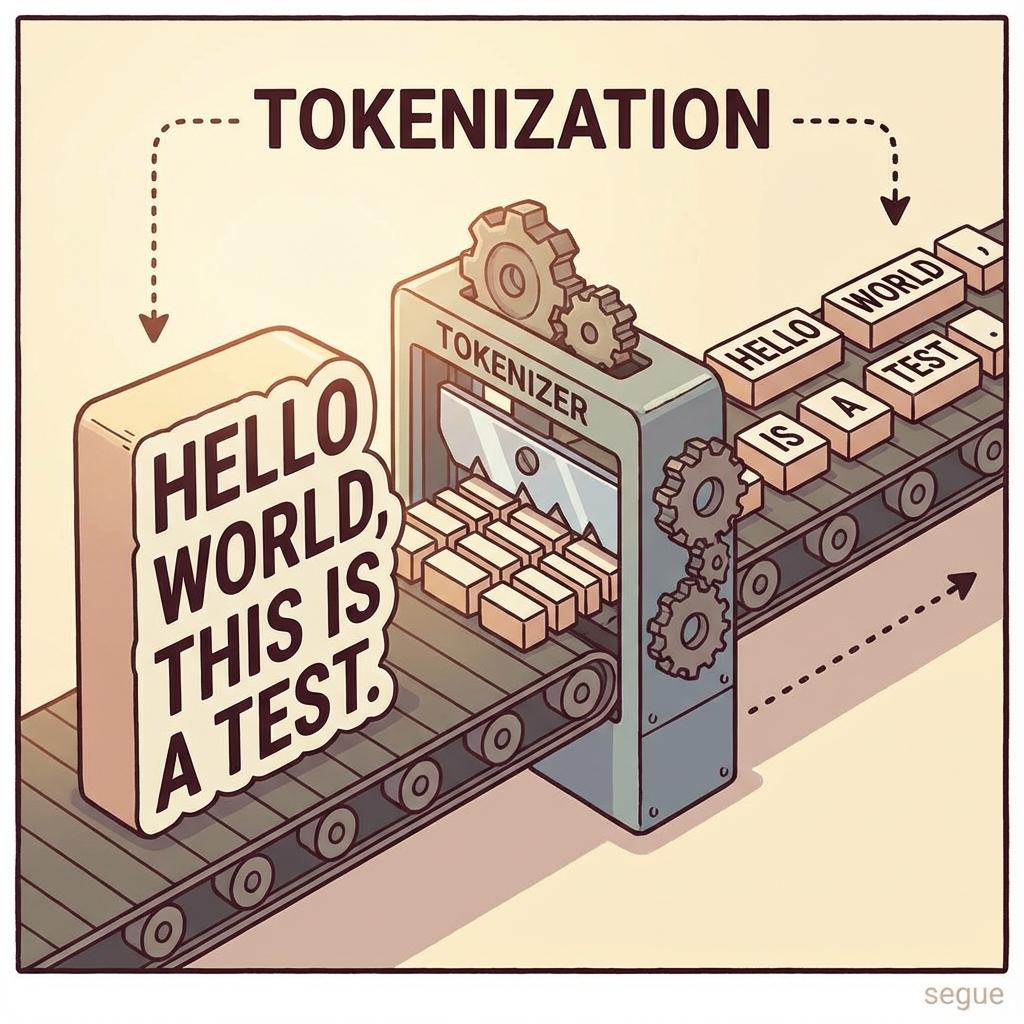

tokenization

/ˌtoʊkənaɪˈzeɪʃən/ Breaking text into smaller units for processing

“Tokenization splits sentences into words or subword pieces.”
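As a rough illustration of the word-level case, the sketch below splits a sentence with a regular expression. The function name `simple_tokenize` and the pattern are assumptions made for this example; real tokenizers for language models usually rely on learned subword vocabularies instead.

```python
import re

def simple_tokenize(text):
    """Split text into lowercase word and punctuation tokens (illustrative only)."""
    # \w+ matches a run of letters or digits; [^\w\s] matches one punctuation mark.
    return re.findall(r"\w+|[^\w\s]", text.lower())

tokens = simple_tokenize("Tokenization splits sentences into words.")
print(tokens)
```

Punctuation becomes its own token here, which is one common convention among several.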

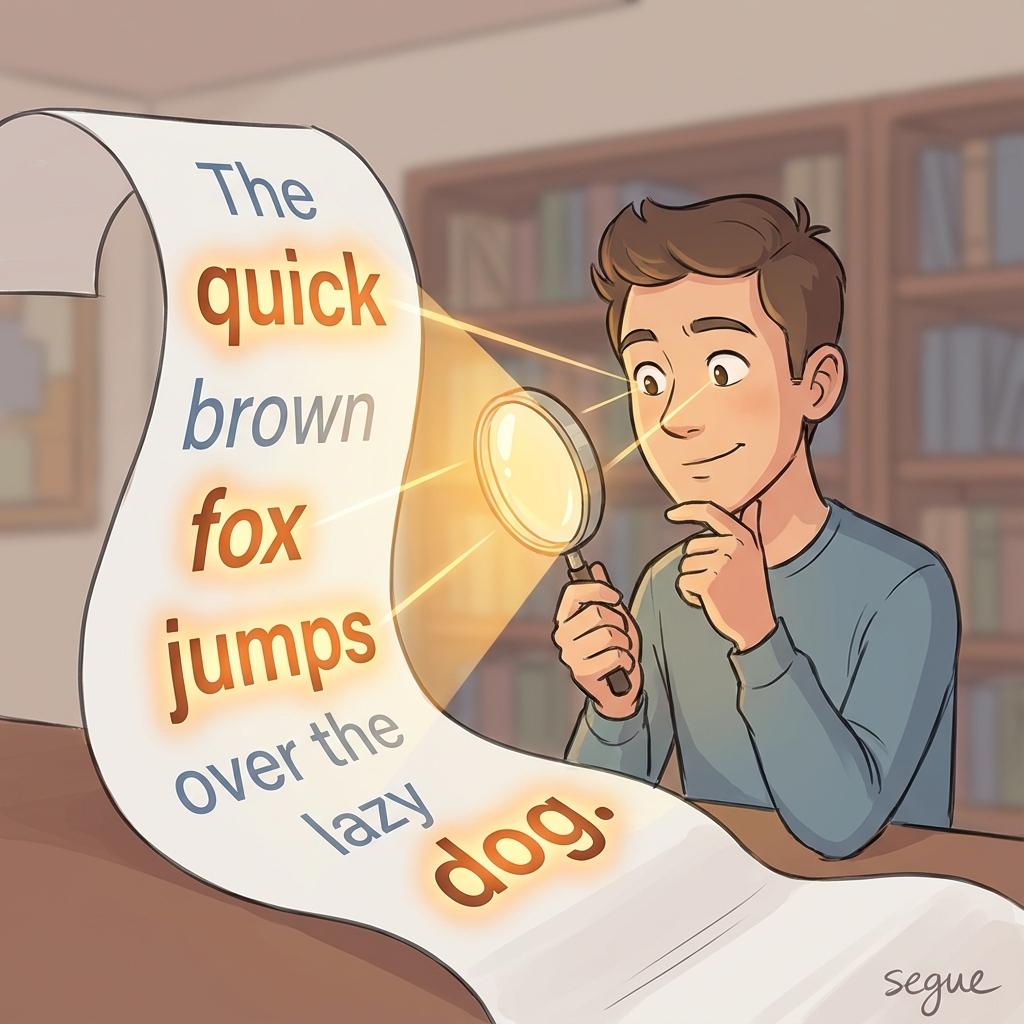

attention mechanism

/əˈtenʃən ˌmekənɪzəm/ A technique allowing models to focus on relevant parts of input

“The attention mechanism helps the model understand context across long sequences.”

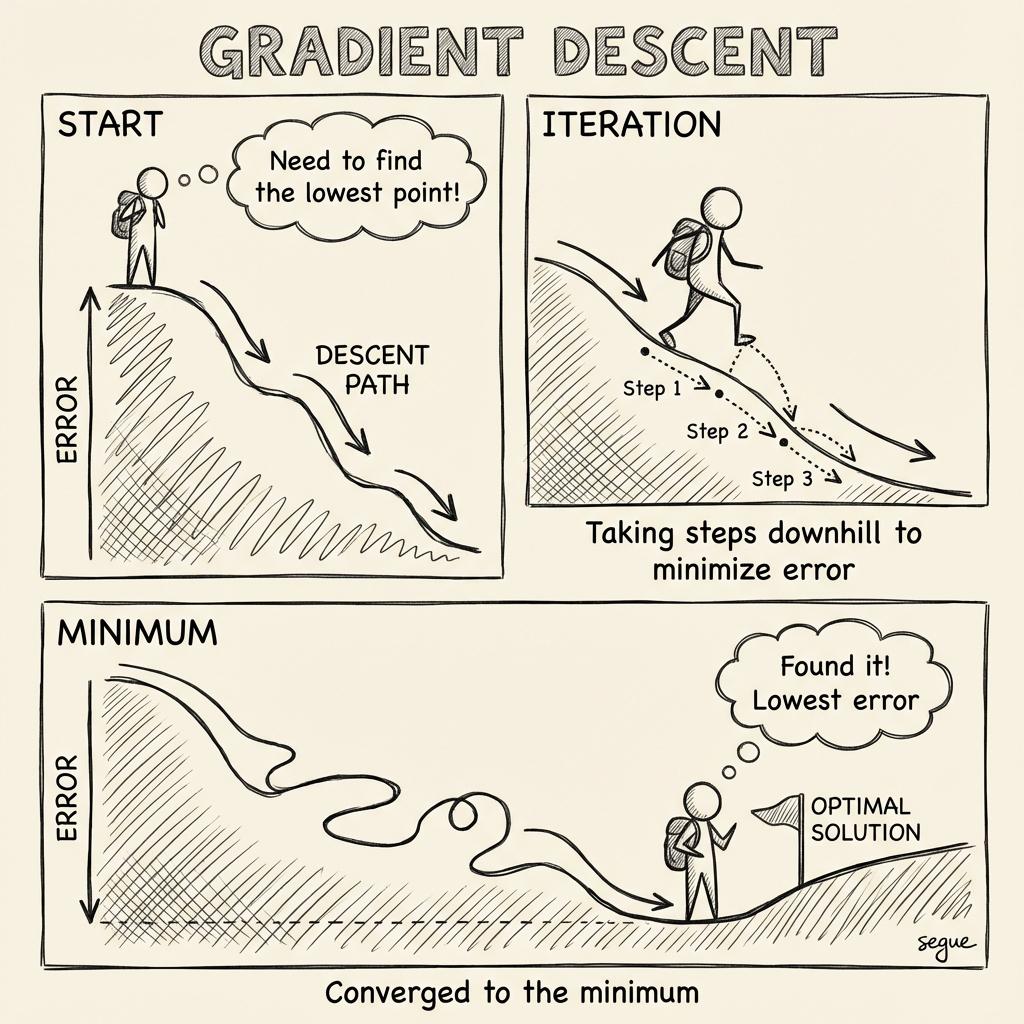

gradient descent

/ˌɡreɪdiənt dɪˈsent/ An optimization algorithm that iteratively reduces error by adjusting parameters in the direction of the negative gradient

“Gradient descent adjusts weights to reduce the loss function.”
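A minimal sketch of the idea, assuming a single parameter and a hand-written gradient. The function `gradient_descent`, the toy objective f(x) = (x - 3)^2, and the default step size are all hypothetical choices for illustration, not a production optimizer.

```python
def gradient_descent(grad, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient to reduce the objective."""
    x = start
    for _ in range(steps):
        # Move a small distance in the direction that decreases the objective.
        x -= learning_rate * grad(x)
    return x

# Toy objective: f(x) = (x - 3)^2, whose gradient is 2 * (x - 3).
minimum = gradient_descent(lambda x: 2 * (x - 3), start=0.0)
print(minimum)
```

With these settings the estimate ends up very close to 3, the point where the toy objective is smallest.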

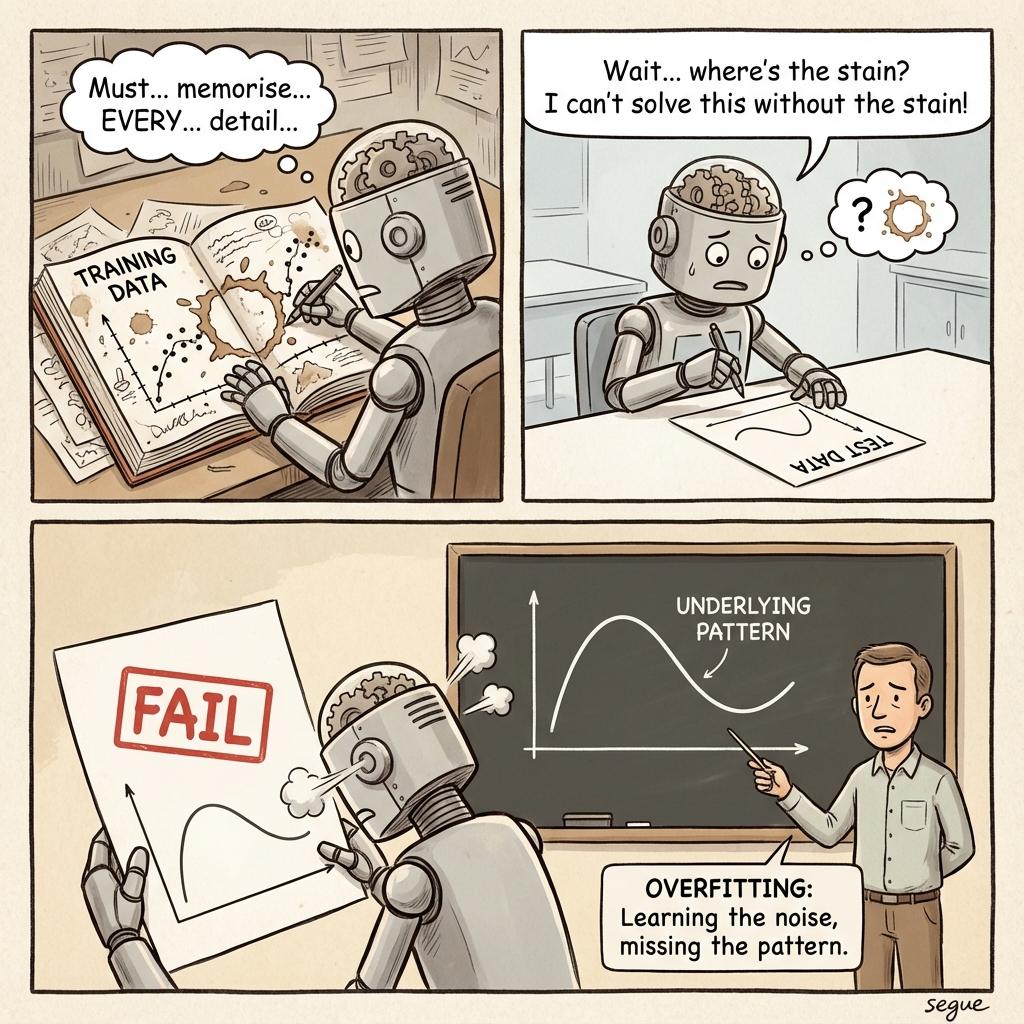

overfitting

/ˈoʊvərˌfɪtɪŋ/ When a model fits noise in the training data instead of the underlying pattern

“Overfitting caused the model to perform poorly on new data.”

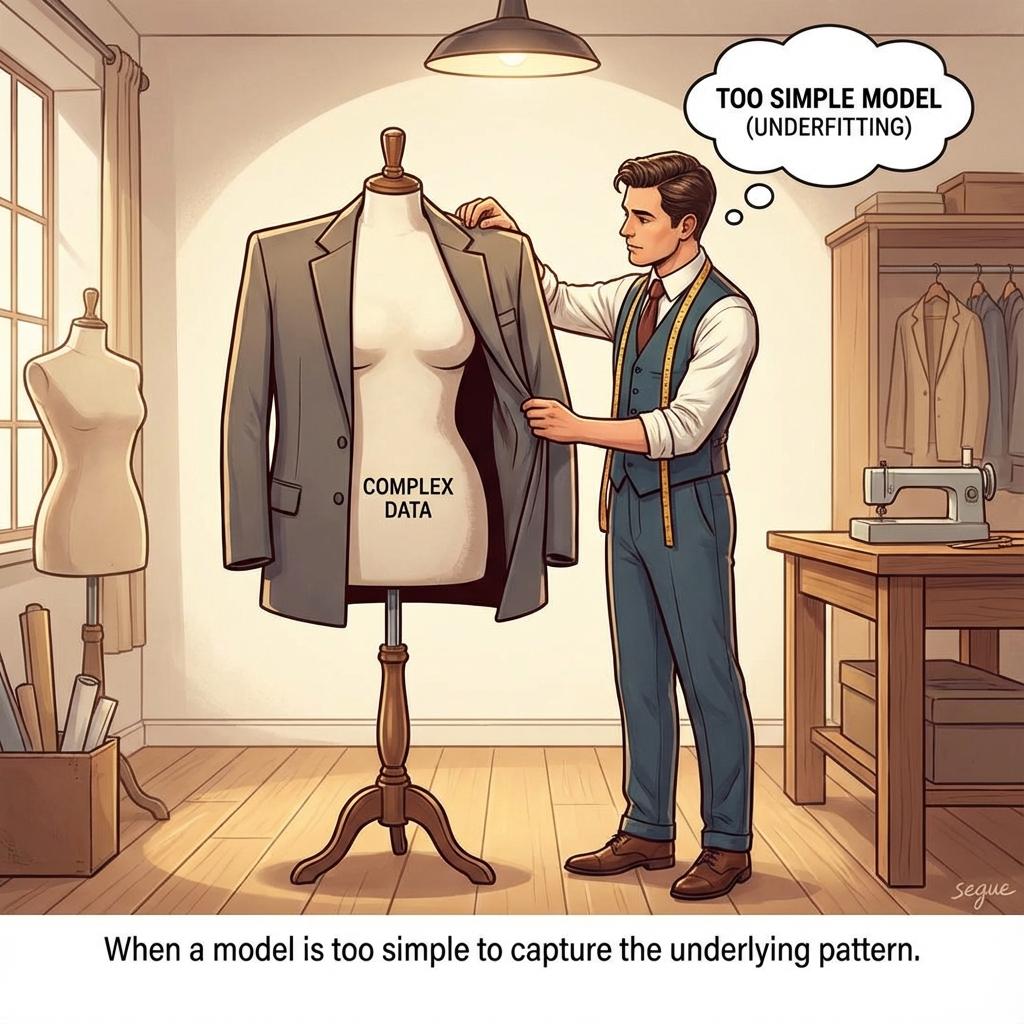

underfitting

/ˈʌndərˌfɪtɪŋ/ When a model is too simple to capture the underlying pattern

“Underfitting resulted in poor performance on both training and test data.”

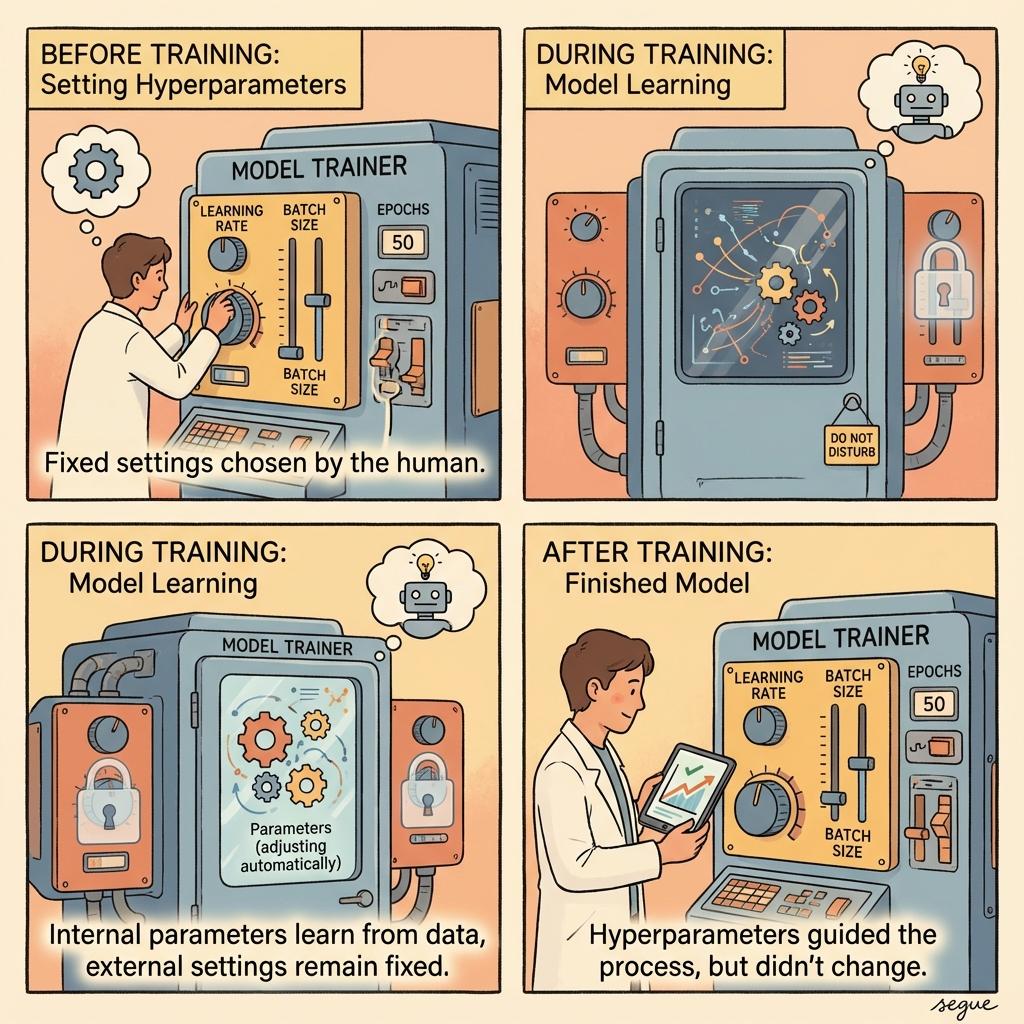

hyperparameter

/ˌhaɪpərˈpærəmiːtər/ A parameter set before training begins, not learned from data

“Tuning hyperparameters like learning rate improved model performance.”

epoch

/ˈɛpək/ One complete pass through the entire training dataset

“The model converged after fifty epochs of training.”

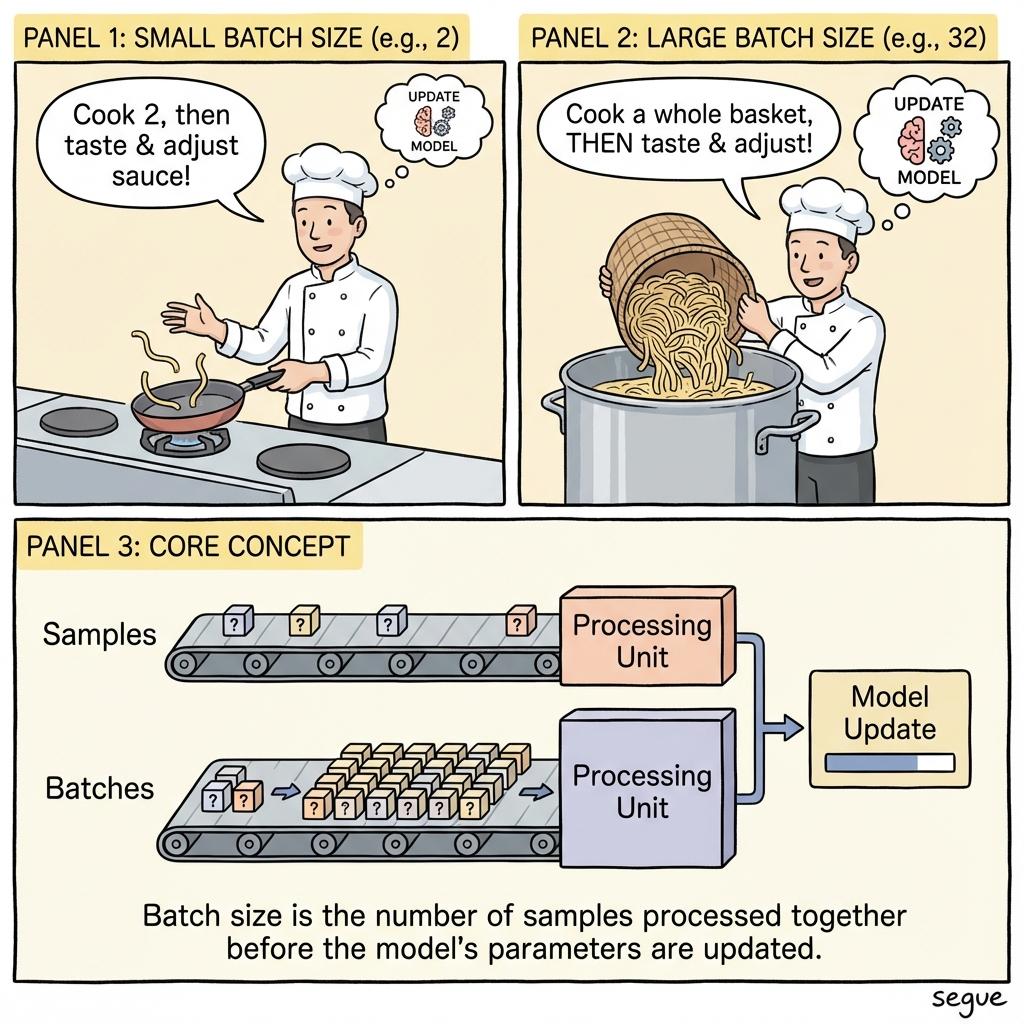

batch size

/ˈbætʃ ˌsaɪz/ The number of samples processed before the model's weights are updated

“Increasing batch size improved training stability but required more memory.”
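To make the relationship between epochs and batch size concrete, here is a small sketch that counts weight updates. The helper `iterate_batches` and the ten-item dataset are assumptions for illustration; a real training loop would compute gradients where the counter is incremented.

```python
def iterate_batches(samples, batch_size):
    """Yield consecutive slices of at most batch_size samples."""
    for start in range(0, len(samples), batch_size):
        yield samples[start:start + batch_size]

samples = list(range(10))  # stand-in for a training dataset
updates = 0
for epoch in range(3):  # three full passes over the data
    for batch in iterate_batches(samples, batch_size=4):
        updates += 1  # placeholder for one weight update per batch

print(updates)
```

Ten samples with a batch size of 4 give three batches per epoch (4, 4, and 2 samples), so three epochs produce nine updates.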

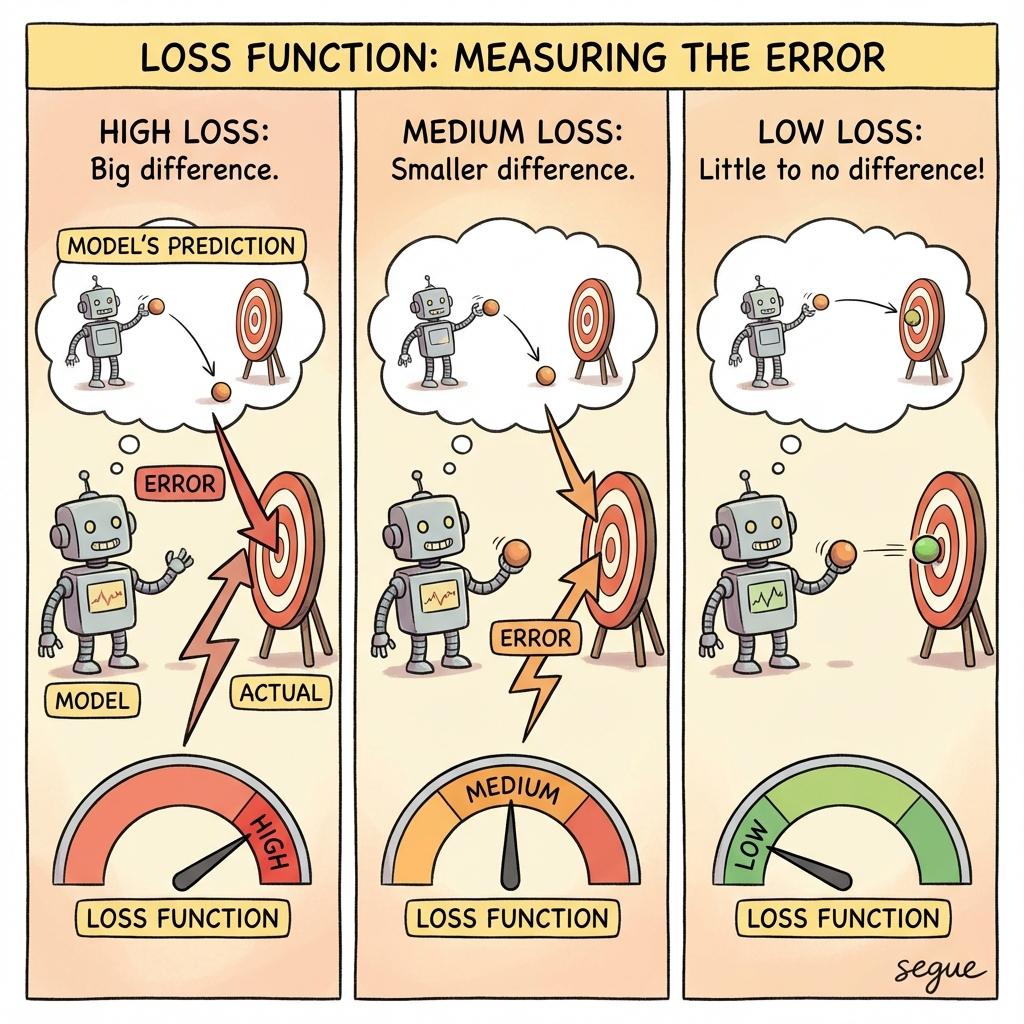

loss function

/ˈlɒs ˌfʌŋkʃən/ A measure of how wrong the model's predictions are

“The loss function quantifies the difference between predictions and actual values.”
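One widely used loss for numeric predictions is mean squared error. The sketch below is an illustrative implementation; the function name and sample values are assumptions, and other tasks typically call for different losses.

```python
def mean_squared_error(predictions, targets):
    """Average of squared differences between predictions and actual values."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    squared_errors = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(squared_errors) / len(squared_errors)

loss = mean_squared_error([2.5, 0.0, 2.0], [3.0, -0.5, 2.0])
print(loss)
```

A loss of zero would mean every prediction matched its target exactly; larger values indicate larger errors.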

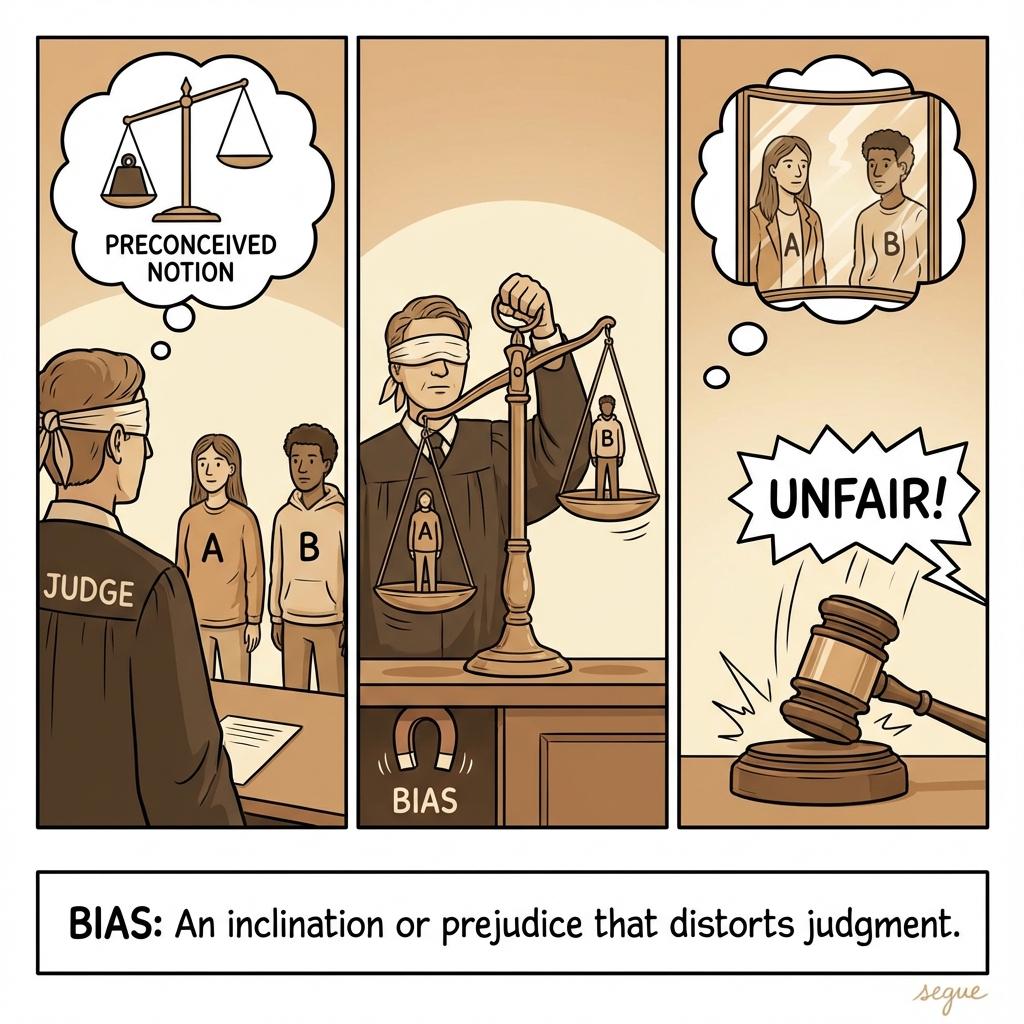

bias

/ˈbaɪəs/ Systematic prejudice in favor of or against one thing, person, or group, which a model can inherit from its training data

“We must ensure the AI model is free from bias.”

algorithm

/ˈæɫɡɝˌɪðəm/ A process or set of rules to be followed in calculations

“The search algorithm ranks results by relevance.”
